Before the advent of the high end PC and Mac with all the wonderful graphics tools, anyone doing any game like programming would be more than accustomed to using pencil and paper tools to build their graphics. Small square lined paper was the main tool there: shading in individual little squares for a 16×16 sprite, then usually converting the rows into binary, then hexadecimal values that would drop into the data structure to make the on screen character. Pixel art is still a big thing though and a genre in its own right.
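For anyone who never did the graph paper routine, here is a minimal Python sketch of that rows to binary to hexadecimal step. The sprite pattern, the `#` and `.` notation and the function names are all made up for illustration, and it uses an 8×8 grid to keep it short, though the same code copes with 16 wide rows.

```python
# A hypothetical 8x8 sprite: '#' is a shaded square on the graph paper, '.' is blank.
SPRITE = [
    "..####..",
    ".#....#.",
    "#.#..#.#",
    "#......#",
    "#.#..#.#",
    "#..##..#",
    ".#....#.",
    "..####..",
]


def row_to_int(row):
    """Read one row left to right as binary digits, leftmost square first."""
    value = 0
    for cell in row:
        value = (value << 1) | (1 if cell == "#" else 0)
    return value


def sprite_to_hex(rows):
    """Convert every row to a zero padded hex string, one hex digit per 4 squares."""
    digits = (len(rows[0]) + 3) // 4
    return [format(row_to_int(row), "0{}X".format(digits)) for row in rows]


if __name__ == "__main__":
    for row, hex_value in zip(SPRITE, sprite_to_hex(SPRITE)):
        print(row, format(row_to_int(row), "08b"), hex_value)
```

The hex strings are what would have been typed into the data statements by hand, one value per row for an 8 wide sprite, or two bytes per row for a 16 wide one.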

It was interesting to see the emergence of this game making/editing toolkit that abstracts that graph paper a little, not dealing so much with pixels but with larger blocks and constructs in a game environment. Family Gamer TV posted this video showing it in action.

It is the physical nature of the building and configuration that makes this different to the regular point and click builds, though it can be used for that too. Having a large number of plastic blocks in different colours means kids, or adults for that matter, can gather around the “graph paper” and chop and change their design on the table top. It is not totally clear how you go back to your source code though. Generally building something you have the base components always there. Here you will clear the rack and start again on the next one. Now if it could 3d print you the “source” if you wanted to start editing from a point in someone else’s rig that would be truly awesome.

It seems that Bloxels are doing something right as they can now only ship in the US due to a lack of inventory. I think it might make an interesting change and tool in primary schools. Though it suggests ages 8+, I know kids younger than that would get the concept pretty quickly.

One thing with graph paper, back in the day, if you knocked it on the floor you still had your design!

A free offering for World Choi Kwang Do week

This week is, for our martial art, Choi Kwang Do, starting to be known as World CKD week. Primarily that is because March 2nd is the anniversary of Grandmaster Choi founding the art, originally in 1987. The week is being themed with the tag line Science meets Martial Arts. This is one of the main reasons Choi Kwang Do works for me. In class we learn, practice and teach things based on the reason they work.

I have written about our family’s martial art of choice many times and my exploration of technology in the art and how I arrived at the art via technology and serendipity too. There is also the more formal article in my writing portfolio about Virtual athletes

All this has led to Choi Kwang Do being a huge part of our family life and we have made so many good friends through it. There is a bond we all feel in the positive spirit of the art. It was shown this weekend as we celebrated with Master Scrimshaw the 5th Anniversary of BasingstokeCKD. Our dohjang was full on Saturday with fellow students from Basingstoke, but also some good friends, old and new, from other schools. We had black belt tag grading, colour belt grading, an incredible set of routines to go through in class and then a great social event with food and cakes. It was incredibly uplifting, and an ideal lead into World CKD week too!

To celebrate this World CKD week I have made Cont3xt free to download. It has an awful lot in it, pivotal to the story, inspired by Choi Kwang Do as an art and a state of mind. It fits with the Science (Fiction) meets Martial Arts tagline for the week. Whilst I am doing this to encourage my fellow practitioners to see ways we can introduce Choi Kwang Do in many different ways, and as a way of saying thank you to them all, there will of course be other people able to download and experience the books for free. All my author bios mention Choi Kwang Do.

We pledge Humility and Integrity, amongst other things, in the art. So promotion of one’s own work like this could feel a little uncomfortable. However I really want to share how CKD inspired elements fit into a science fiction techno thriller in a very positive way. It was the just getting on with it unbreakable spirit that we learn, that even got me to write these two books.

As Cont3xt is the follow up book I have also made Reconfigure free for the week too. The martial arts arrive in Cont3xt, but not in the way you might think to start off with. In Reconfigure (book 1) Roisin has no such skills, but the book is there for free too for completeness.

I hope a few people get a chance to take a look, maybe even pop a few stars of reviews on Amazon. I would also love for someone who doesn’t do CKD yet to read Cont3xt, look up the art, find their nearest school and start to train. It’s a long shot, but every person in the World is a potential student to join us, have some fun and learn something really useful about themselves.

Choi Kwang Do is practical self defence, but we aim to never need to use it, and we also don’t fight and hurt our fellow students via competition and sparring. I believe the quote is “It is better to be a warrior in a garden than a gardener in a war.”

So there we have it, the books are available free on Amazon, to be used in whatever way works for whomsoever needs them. Cont3xt and Reconfigure here.

Pil Seung! (Certain victory)

Real Steel – In toy form – Big Robots

Controlling robots directly with your own physical motions is something we see a lot in sci-fi. Films like Real Steel have battling bots tearing one another apart in the ring. As a kid I had a version of the battling robot boxing game. Rock ’Em Sock ’Em Robots was the main contender. It had mechanical bots on the end of some push rods. The robots were locked into the boxing ring. Thumb presses aimed to hit the other bot square on the chin, lifting his head up for the win. My boxers were free moving versions, but looked like real people. The trolley wheels on the base of the 12 inch figures meant lots of positioning and jostling as you tried to free move and get those punches in. Of course all that went out of the window when the digital fighting games arrived and Street Fighter et al. blew away the physical fighting toys.

Now the bots are fighting back and @FamilyGamerTV has some coverage here of motion controlled free moving fighting bots that are soon to hit the shelves. The video explains it all, but it seems the motion is directional, hooks and upper cuts, not just the thumb pressing single motion of the old fashioned fighting bots.

Big Robots, as they are not so imaginatively called, add a little spice to the radio control market. Now if we could just make them a little bigger we would be ready to fight Godzilla when he walks out of the ocean Pacific Rim style.

Self driving cars – The concept of electric opens the way

I attended a lovely wedding on Friday. It was one that I did not really know anyone at, except @elemming of course. We drove to Chorley Wood, but took the petrol car as it was about a 100 mile round trip. The Leaf could make it with a splash and dash charge, but it was not worth the extra hassle. We sat in a very long M25 traffic jam getting there, 50 miles in 2 hours. Coming home late that night the M3 was closed for roadworks so we had a bit of a detour to Reading in order to get back to Basingstoke. That experience is a very common one on our road system here in the UK. Petrol guzzling engine blocks sat almost motionless in a long queue. As I sat in the jam I thought how the electric Leaf would not be using any power at all sat still, but also that if all these cars were computer controlled there would be no jam, as efficient network algorithms would get us all where we needed to go, as long as everything was able to talk to everything else.

Oddly, we gave some people a lift from the church to the reception. In the few minutes drive our electric car came up in conversation. People are still intrigued. It is still early adopter territory, but in a well understood space. How does it work, how much does it cost, are they really that fast? etc. I am a tech evangelist so I love sharing this sort of information.

The subject of Tesla came up too. Elon Musk and his wide ranging and World changing innovations became the topic of the continuing chat. In particular we talked about self driving cars. It was talked about, not in a laughing at the concept way, but in a how long before they arrive way. I mentioned the fact that Teslas were already patched over the air, like an iPhone app would be, and had some basic extensions applied to them to enable self driving features. Once again this did not seem odd to anyone in the car.

It seems that the reality of an electric car, real people owning real ones and using them, makes a dent in the automobile paradigm. It’s electric, therefore it is probably all ‘computery’ and of course it will be on the Internet as a composite Internet of Things device. That may be a terminology step too far for someone not in the industry, but the principle is there in people’s minds.

A petrol car is stuck, tethered to a petrol pump, constantly pouring pounds into it. It is heavy and lumbering, resistant to change. It is like a telephone box on the street. The electric car is more like a wifi enabled, 4G smartphone. It can do way more than just make calls. After all if you are going to completely change how a vehicle works, and see that it does, why not change everything else around it, including who drives it.

This morning on the BBC news Ford were at the Mobile World Congress. They were explaining they were no longer just a car maker, but a platform maker. When asked when they would have full self driving cars the answer was that they already have some assistance features (which are like the Tesla) and that they had not set a date for a Level 4 fully autonomous vehicle yet, but when they did it would be mass market.

It was the first time I had heard the term Level 4. Wikipedia came to my aid on this one.

From https://en.wikipedia.org/wiki/Autonomous_car

In the United States, the National Highway Traffic Safety Administration (NHTSA) has proposed a formal classification system:[14]

Level 0: The driver completely controls the vehicle at all times.

Level 1: Individual vehicle controls are automated, such as electronic stability control or automatic braking.

Level 2: At least two controls can be automated in unison, such as adaptive cruise control in combination with lane keeping.

Level 3: The driver can fully cede control of all safety-critical functions in certain conditions. The car senses when conditions require the driver to retake control and provides a “sufficiently comfortable transition time” for the driver to do so. Example: Tesla Model S

Level 4: The vehicle performs all safety-critical functions for the entire trip, with the driver not expected to control the vehicle at any time. As this vehicle would control all functions from start to stop, including all parking functions, it could include unoccupied cars.

An alternative classification system based on five different levels (ranging from driver assistance to fully automated systems) has been published by SAE, an automotive standardisation body.

It is interesting that we have such a leap in levels. The move from 3 to 4 is huge if you think about it. If we were starting the road system from scratch now, we might just dive into Level 4. Dedicated lanes, less complexity and adversity for the computers to have to cope with. Now though we have a mixed system. Any level 4 car will have to cope with all the existing Level 0 drivers and a world built for them. e.g. a full Level 4 system would not need traffic lights. Cars could interleave at junctions with an automated flow system.

As you can see just from a Wikipedia article, even the standardisation of the level numbers has not occurred. How and where the massive automotive corporations are going to collaborate on communications standards across the vehicles is going to be interesting. The pressure on the software industry to create realtime systems that do not fail at all is also going to be high. All our computers, phones etc crash. They need a reboot here and there. That doesn’t matter so much sat at your desk, but in a car hurtling at 70mph+, in an environment where lots of the other cars are still Level 0 and have human driver quirks to deal with, not having any software problems and not actually crashing is no mean feat.

As a long time software engineer, I remember that we used to have a long lead time in testing. Once deployed, changes and tweaks did not happen. Fixes were bundled and applied to big central systems but you tended to have to get it correct first time. Now we are in a permanent patch environment. This is great as things can improve over time, but, with the pressures to hit deadlines, it can also cause an attitude in engineering that it is OK, we can patch it later over the Internet.

I wonder what is going to happen to the automotive industry, and the things around it. The diversity of car design, engine performance and general handling all feature heavily in shows like Top Gear and whatever Amazon’s reboot of it will be called. If our vehicles just become self driving taxis will we still try and show off our design choices and apparent status with them? Will a custom car be nothing more than a large iPhone case? There are some huge social implications in how we feel about cars and what we do in them. A car will be an office, full attention can be given to phone calls or emails, maybe even just donning your VR headset for a virtual meeting on a nice simulated desert island rather than watching the motorway sidings zoom past.

It is definitely an area that will impact all our lives and is another exciting, and slightly scary one to consider.

Need For Speed – My stuff in their video

We like cars in this house. Car games are also a big favourite, naturally. I really enjoy the analogue nature of continuous adjustments as you hurtle around a track. Need for Speed has undergone a transformation over the years, it, and its genre, clearly influenced films like Fast and Furious and now it seems to have come full circle in the latest game. It feels like a side plot of the Vin Diesel epic action movies.

The racing and missions, the customisation and the heavy use of NoS are all pretty standard in this version of Need for Speed. I was surprised, though, to see live action cut scenes. These sort of acted out mini parts of the story, with real people, used to be something that was tried years ago, and generally failed. They did not feel part of the game. To go from a live action real world then blend back to a not quite so real digital view jarred. Also many times the acting was not all that. The alternative was only FMV, with a few digital overlays. That gave a lack of freedom, flicking to new video links at decision points.

This Need For Speed has a full on racing crew with all their baseball caps in reverse and dungarees in place. It feels interesting to hear them talk, though it is still a little odd IMHO. What blew me away though was the car customisation. The principle is the same as in Forza. Using decals and colours, shapes and some basic tools to morph those, you are able to wrap your car and make it your own (or download someone’s hard work). This makes sense in the game engine, it is just generated graphics, so why not? When a cut scene started in the garage and my custom car was in the full motion video though I gave a little cheer. The video shows some of how it appears. The car has Reconfigure and Cont3xt written on either side, of course.

Digital compositing into live video is something that is hitting our TV screens in ways we may not ever actually notice. This is the first time I can recall it being so done in a game FMV in quite this way. They also don’t do it all the time, it is not a major feature they shout about. I had to do some tricky missions to try and find one that I could record that included this. It does work though. The car is really your avatar in the game, even though there is a first person camera view for your character in the FMV. I found it added to the experience, seeing my stuff in their video. Makes me want to make a film even more 🙂

Read the book Cont3xt available for download here

Excited tech celebs and a countdown – Meta #AR

There is a countdown running and people are showing their excitement. This time, not for an enclosed virtual reality headset but for a full computing platform Augmented Reality headset. It is Meta @metaglasses. I have talked about and written a lot (including the two novels!) about augmented reality and what it means when it is done properly. This teaser video features many luminaries of tech, pop culture and business evangelising the product based on the demo they have seen. Scoble seems particularly gushing about it. He posted an hour’s worth of that on Facebook after his intro demo, but as it’s all embargoed he could only talk generally.

With Hololens, Magic Leap and now Meta (assuming it is in the same category which at $600 – $3000 it will be) and of course my own fictional EyeBlend there is a lot going on that may leap frog the VR wave this time around.

This has been around and developing for a while. Initially it seemed to have to answer to Google Glass, which was really just a heads up display, not full AR. The early videos show bug eye aviator inspired glasses but the latest pictures are more visor with a noticeable sensor bar.

will.i.am seems pretty interested in it too. As he says, it opens up the possibilities for the arts. So maybe he will help me make the blended reality movie or series for Reconfigure?

This sort of kit goes past what we would call gaming and entertainment as it provides realtime feedback of the World and the Internet of Things. Helping us see the stuff we can’t see normally, but in situ. Again with Meta it will depend on how they build the physical model, or if they do, of the World in order to implement the digital in place views. Some earlier videos show the use of real world objects, a flat surface such as a box, being used as canvas tracking and a reference point for the digital content.

The countdown clock is ticking though, and the final hype is being ramped up. So it is exciting, however it turns out. Their countdown says 19 days left (best check the site as that is of course an out of date piece of text 🙂 )

Lucky 7 years – Feeding Edge birthday

Wow. It is seven years since I started Feeding Edge Ltd. That is quite a long while isn’t it? The past year has been a more difficult one with less work in the pipeline for most of it. It has meant I have had to take stock and look to do other things, whilst the World catches up. It does seem strange, given the dawn of the new wave of Virtual Reality and Augmented Reality, that I have not managed to find the right people to engage my expertise and background in both regular technology and virtual worlds. In part that was because I was focussing on one major contract. When that dried up suddenly there was nowhere to go. It is starting from zero again to build up and sell who I am and what I do.

My other startup work has always been ticking along under the covers, as we try and work the system to find the right person with the right vision to fund what we have in mind. It is a big and glorious project, but it all takes time. Lots of no, yes but and even a few let’s do it, followed by oh hang on, can’t now, other stuff has come up.

On the summer holiday, whilst I was having a good long think about whether to give this all up and try and find a regular position back in corporate life, I was hit with a flash of inspiration for the science fiction concept. It was so obvious that I just had to give it a go. That is not to say I would not accept a well paid job with slightly more structure to help pay my way. However, the books flowed out of me. It was an incredibly exciting end to the year. Learning how to write and structure Reconfigure, how to package and build the ebook and the print version. How to release and then try and promote it. I have learned so much doing it that helps me personally, helps my business and also will help in any consulting work I do in the future. I realised too that the products of both Reconfigure and Cont3xt are like a CV for me. They represent a state of the virtual world, virtual reality, augmented reality and Internet of Things industry, combined with the coding and use of game tech that comes directly from my experiences, extrapolated for the purpose of story telling.

Write what you know, and that appears to be the future, in this case the near future.

This year I have also been continuing my journey in Choi Kwang Do. This time with a black suit on as a head instructor. It has led me to give talks to schools on science and why it is important, with a backdrop of Choi Kwang Do as a hook for them. I am constantly trying to evolve as a teacher and a student. Once again the reflective nature of the art was woven into the second book Cont3xt. I did not brand any of the martial arts action in the book as Choi Kwang Do as that may mis-represent the art and I don’t want to do that, but it did influence the start of the story with its more reflective elements, later on a degree of poetic licence kicked in, but the feelings of performing the moves is very real.

I have continued my pursuit of the unusual throughout the year. The books as a product provide, rather like the Feeding Edge logo has in the past, a vehicle to explore ideas.

I still really like my Forza 6 book branded Lambo, demonstrating the concept of digital in world product placement.

If you have read the books, and if not why not? they are only 99p, you will know that Roisin likes Marmite. Why not? I like Marmite, again write what you know. It became a vehicle and an ongoing thread in the stories, and even a bit of a calling card. It is a real world brand, so that can be tricky, but I think I use it in a positive way, as well as showing that not everyone is a fan. So it is just another real world hook to make the science fiction elements believable. So I was really pleased when I saw that Marmite had a print your own label customisation. It is print on demand Marmite, just as my books are print on demand. It uses the web and the internet to accept the order and then there is physical delivery. I know it’s a bit meta but that’s the same pattern Roisin uses, just the physical movement of things is a little more quirky 🙂

I have another two jars on the way. One for Reconfigure and one for Roisin herself.

I am sure she will change her own twitter icon from the regular jar to one of these later as @axelweight. Yes, she does have a Twitter account. She had to, otherwise she would not have been able to accidentally Tweet “ls -l” and get introduced to the World changing device @RayKonfigure, would she?

All this interweaving of tech and experience, in this case related to the books, is what I do and have always done. I hope my ideas are inspirational to some, and one day obvious to others. I will keep trying to do the right thing, be positive and share as much as possible.

I am available to talk through any opportunities you have, anytime. epredator at feedingedge.co.uk or @epredator

Finally, last but not least, I have to say a huge thank you to my wife Jan @elemming. She has the pressure of the corporate role, one that she enjoys, but still it is pressure. She is the major breadwinner. You can imagine how many 99p books you have to sell to make any money to pay anything. She puts up with the downs whilst we wait for the ups. Those ups will re-emerge, this year has shown that to me. No matter how bleak it looks, something happens to offer hope. I have some new projects in the pipeline, mostly speculative, but with all these crazy ideas buzzing around something will pop one day.

As we say in Choi Kwang Do – Pil Seung! which means certain victory. Happy lucky 7th birthday Feeding Edge 🙂

Using the Real World – IoT, WebGL, MQTT, Marmite, Unity3d and CKD

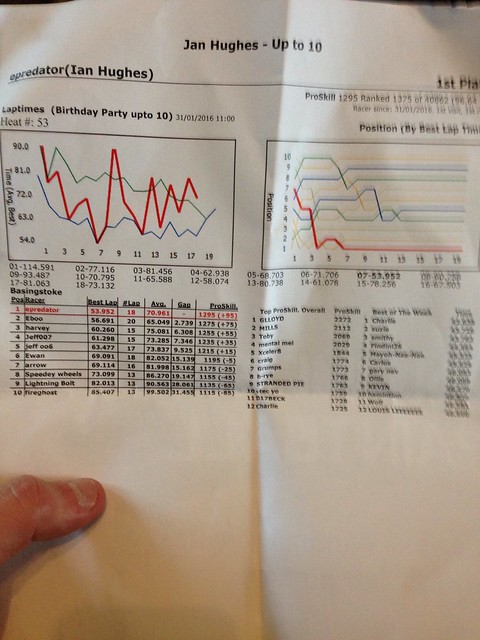

All the technology and projects I have worked on in my career take what we currently have at the moment and create or push something further. Development projects of any kind will enhance or replace existing systems or create brand new ones. A regular systems update will tweak and fix older code and operations and make them fresher and new. This happens even in legacy systems. In both studying and living some of the history of our current wave of technology, powered by the presence of the Internet, I find it interesting to reflect on certain technology trajectories. Not least to try and find a way to help and grow these industries, and with a bit of luck actually get paid to do that. I find that things finding out about other things is fascinating. For Predlet 2.0’s birthday party we took them all Karting. There was a spare seat going so I joined in. The Karts are all instrumented enough that the lap times are automatically grabbed as you pass the line. Just that one piece of data for each Kart is then formulated and aggregated. Not just with your group, but with the “ProSkill” ongoing tracking of your performance. The track knows who I am now I have registered. So if I turn up and race again it will show me more metrics and information about my performance, just from that single tag crossing the end of lap sensor. Yes, that is IoT in action, and we have had that for a while.

The area of Web services is an interesting one to look at. Back in 1997, whilst working on a very early website for a car manufacturer, we had a process to get to some data about skiing conditions. It required a regular CRON job to be scheduled and perform a secure FTP to grab the current text file containing all the ski resorts’ snowfall, so that we could parse it and push it into a form that could be viewed on the Web, i.e. it had a nice set of graphics around it. That is nearly 20 years ago, and it was a pioneering application. It was not really a service or an API to talk to. It used the available automation we had, but it started as a manual process: pulling the file and running a few scripts to try and parse the comma delimited data. The data, of course, came from both human observation and sensors. It was collated into one place for us to use. It was a real World set of measurements, pulled together and then adjusted and presented in a different form over the Internet via the Web. I think we can legitimately call that an Internet of Things (IoT) application?
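To give a feel for the parsing half of that pattern, here is a rough Python sketch. It is not the 1997 scripts. The column names, the resort names and the sample rows are all invented for illustration, and the scheduled fetch of the file is left out entirely.

```python
import csv
import io

# Hypothetical sample of a comma delimited snow report. The real file layout
# is not described in the post, so these columns are an assumption.
SAMPLE = """resort,snowfall_cm,updated
Val Exemple,35,1997-01-14
Mont Sample,12,1997-01-14
Broken Row,not-a-number,1997-01-14
"""


def parse_snow_report(text):
    """Return one dict per resort, skipping rows whose snowfall is unreadable."""
    reports = []
    for row in csv.DictReader(io.StringIO(text)):
        try:
            depth = int(row["snowfall_cm"])
        except (KeyError, TypeError, ValueError):
            # A corrupted export should not take the whole page down.
            continue
        reports.append({"resort": row["resort"], "snowfall_cm": depth})
    return reports


if __name__ == "__main__":
    for report in parse_snow_report(SAMPLE):
        print("{resort}: {snowfall_cm} cm".format(**report))
```

Skipping bad rows rather than failing is a design choice for the sketch. With a file that arrives on a schedule from somewhere else, a partial page is usually better than no page.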

We had a lot of fancy and interesting projects then, well before their time, but that are templates for what we do today. Hence I am heavily influenced by those, and having absorbed what may seem new today, a few years ago, I like to look to the next steps.

Another element of technology that features in my work is the ways we write code and deploy it. In particular the richer, dynamic game style environments that I build for training people in. I use Unity3d mostly. It has stood the test of time and moved on with the underlying technology. In the development environment I can place 3D objects and interact with them, sometimes stand alone, sometimes as networked objects. I tend to write in C# rather than Javascript, but it can cope with both. Any object can have code associated with it. It understands the virtual environment, where something is, what it is made of etc. A common piece of code I use picks one of the objects in the view and then, using the mouse, the virtual camera view can orbit that object. It is an interesting feeling still to be able to spin around something that initially looks flat and 2D. It is like a drone’s eye view, hovering or passing over objects.
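The orbiting itself is just a bit of trigonometry. This is a minimal sketch of the maths in plain Python, not Unity C# and not the actual script described above. The function names, the y-up axis convention and the sensitivity value are assumptions made for the example.

```python
import math


def orbit_position(target, distance, yaw_deg, pitch_deg):
    """Camera position on a sphere of radius `distance` around `target`.

    Assumes y is up. Yaw spins around the vertical axis, pitch tilts up or down.
    """
    yaw = math.radians(yaw_deg)
    pitch = math.radians(pitch_deg)
    tx, ty, tz = target
    x = tx + distance * math.cos(pitch) * math.sin(yaw)
    y = ty + distance * math.sin(pitch)
    z = tz - distance * math.cos(pitch) * math.cos(yaw)
    return (x, y, z)


def apply_mouse_drag(yaw_deg, pitch_deg, dx, dy, sensitivity=0.25):
    """Turn a mouse movement in pixels into new yaw and pitch angles."""
    yaw_deg = (yaw_deg + dx * sensitivity) % 360.0
    # Clamp pitch so the camera never flips over the top of the object.
    pitch_deg = max(-89.0, min(89.0, pitch_deg - dy * sensitivity))
    return yaw_deg, pitch_deg


if __name__ == "__main__":
    yaw, pitch = apply_mouse_drag(0.0, 20.0, dx=120, dy=0)
    print(orbit_position((0.0, 1.0, 0.0), 5.0, yaw, pitch))
```

Each frame the camera is placed at the returned position and pointed back at the target, which is what gives the spinning around the object feeling.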

Increasingly I have had to get the Unity applications to talk to the rest of the Web. They need to integrate with existing services, or with databases and APIs that I create. User logons, question data sets, training logs etc. In many ways it is the same as back in 1997. The pattern is the same, yet we have a lot more technology to help us as programmers. We have self defining data sets now. XML used to be the one everyone raved about. Basically web like tags around data to start and stop a particular data element. It was always a little too heavy on payload though. When I interacted with the XML data from the tennis ball locations for Wimbledon the XML was too big for Second Life to cope with at the time. The data had to be mashed down a little, removing the long descriptions of each field. Now we have JSON, a much tighter description of data. It is all pretty much the same of course. An implied comma delimited file, such as the ski resort weather, worked really well, if the export didn’t corrupt it. An XML version would be able to be tightly controlled and parsed in a more formal language style way. JSON is between the two. In JSON the data is just name:value, as opposed to XML, with its opening and closing tags around each element.
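The difference in weight is easy to see by writing the same record out all three ways. This Python sketch uses a made up ball position record. The field names and values are hypothetical and are not the real Wimbledon feed.

```python
import json
import xml.etree.ElementTree as ET

# Hypothetical record, invented for the comparison.
record = {"player": "A", "x": 1.25, "y": 0.4, "z": 11.9}


def to_xml(rec):
    """Wrap every value in its own opening and closing tag."""
    root = ET.Element("ballPosition")
    for name, value in rec.items():
        ET.SubElement(root, name).text = str(value)
    return ET.tostring(root, encoding="unicode")


def to_json(rec):
    """Compact name:value pairs with no extra whitespace."""
    return json.dumps(rec, separators=(",", ":"))


def to_csv_line(rec):
    """Values only. The meaning of each column is implied by its position."""
    return ",".join(str(value) for value in rec.values())


if __name__ == "__main__":
    for label, text in (("XML", to_xml(record)),
                        ("JSON", to_json(record)),
                        ("CSV", to_csv_line(record))):
        print("{:4} {:3} chars  {}".format(label, len(text), text))
```

The comma delimited line is the smallest but says nothing about what each value means, the XML says it twice per field, and the JSON sits in between.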

Unity3d copes well with JSON natively now. It used to need a few extra bits of code, but as I found out recently it is very easy to parse a web based API using code, extract those pieces of information and adjust the contents of the 3d environment accordingly. By easy, I mean easy if you are a techie. I am sure I could help most people get to the point of understanding how to do this. I appreciate too that having done this sort of thing for years there is a different definition of easy.
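As an illustration of that parse and adjust step, here is a small Python sketch rather than Unity C#. The response layout, the object names and the `apply_update` helper are all assumptions made up for the example, and the web request itself is left out.

```python
import json

# Hypothetical API response: a list of named objects with x, y, z positions.
RESPONSE = (
    '{"objects":['
    '{"name":"mug","position":[0.5,0.0,1.0]},'
    '{"name":"lamp","position":[2.0,1.5,0.0]}'
    ']}'
)


def apply_update(scene, response_text):
    """Move objects already in the scene to the positions in the response.

    `scene` maps object names to (x, y, z) tuples. Unknown names and
    malformed positions are ignored. Returns the names that were moved.
    """
    payload = json.loads(response_text)
    moved = []
    for item in payload.get("objects", []):
        name = item.get("name")
        position = item.get("position")
        if name in scene and isinstance(position, list) and len(position) == 3:
            scene[name] = tuple(float(c) for c in position)
            moved.append(name)
    return moved


if __name__ == "__main__":
    scene = {"mug": (0.0, 0.0, 0.0), "chair": (1.0, 0.0, 1.0)}
    print(apply_update(scene, RESPONSE), scene)
```

In an engine the dictionary would be replaced by a lookup of the real scene objects, but the shape of the code is much the same.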

It is this grounding in pulling real World data from the Internet, manipulating it and serving it to the Web that seems to be a constant pattern. It is the pattern of IoT and of Big Data.

As part of the ongoing promotion of the science fiction books I have written I created a version of the view Roisin has of the World in the first novel Reconfigure. In that she discovers an API that can transcribe and describe the World around her.

This video shows a simulation of the FMM v1.0 (Roisin’s application) working as it would for her. A live WebGL version that just lets you move the camera around to get a feel for it is here.

WebGL is a new target that Unity3d can publish to. Unity used to be really good because it had a web plugin that let us deploy applications, rich 3d ones, to any web browser, not just build for PC, Mac and tablets. Every application I have done over the past 7 years has generally had the web plugin version at its core to make life easier for the users. Plugins are dying and no longer supported on many browsers. Instead the browser has functions to draw things, move things about on screen etc. So Unity3d now generates the same thing as the plugin, which was common code, but creates a mini version for each application that is published. It is still very early days for WebGL, but it is interesting to be using it for this purpose as a test and for some other API interactions with sensors across the Web.

In the story, the interaction Roisin has starts as a basic command line (but over Twitter DM), almost like the skiing FTP of 1997. She interrogates the API and figures out the syntax, which she then builds a user interface for. Using Unity3d of course. The API only provides names and positions of objects, hence the cube view of the World. Roisin is able to move virtual objects and the API then, using some Quantum theory, is able to move the real World objects. In the follow up, this basic interface gets massively enhanced, with more IoT style ways of interacting with the data, such as with MQTT for messaging instead of Twitter DMs as in the first book. All real World stuff, except the moving things around. All evolved through long experience in the industry to explain it in relatively simple terms and then let the adventure fly.

I hope you can see the lineage of the technology in the books. I think the story and the twists and turns are the key though. The base tech makes it real enough to start to accept the storyline on top. When I wrote the tech parts and built the storyboard, they were the easy bits. How to weave some intrigue, danger and peril in was something else. From what I have been told, and what I feel, this has worked. I would love to know what more people think about it though. It may work as a teaching aid for how the internet works, what IoT is etc for any age group, from schools to boardroom? The history and the feelings of awe and anger at the technology are something we all feel at some point with some element of our lives too.

Whilst I am on the real World though. One of the biggest constants in Roisin’s life is the like it or love it taste of Marmite. It has become, through the course of the stories, almost a muse-like character. When writing you have to be careful with real life brands. I believe I have used the ones I have in these books as proper grounding with the real World. I try to be positive about everyone else’s products, brands and efforts.

In Cont3xt I also added in some martial arts, from my own personal experience again, but adjusted a little here and there. The initial use of it in Cont3xt is not what you might think when you hear martial art. I am a practitioner of Choi Kwang Do, though I do not specifically call any of the arts used in the book by that name as there are times it is used aggressively, not purely for defence. The element of self improvement is in there, but with a twist.

Without the background in technology over the years and seeing it evolve, and without my own personal gradual journey in Choi Kwang Do, I would not have had the base material to draw upon, to evolve the story on top of.

I hope you get a chance to read them, it’s just a quick download. Please let me know what you think, if you have not already. Thank you 🙂

It’s alive – Cont3xt – The follow up to Reconfigure

On Friday I pressed the publish button on Cont3xt. It whisked its way onto Amazon and the KDP select processes. The text box suggested it might be 72 hours before it was live; in reality it was only about 2 hours, followed by a few hours of links getting sorted across the stores. I was really planning to release it today, but, well, it’s there and live.

I did of course have to mention it in a few tweets, but I am not intending to go overboard. I recorded a new promo video, and played around with some of the card features now on YouTube to provide links. I am just pushing a director’s cut version. The version here is a 3 minute promo. The other version is 10 minutes explaining the current state of VR and AR activity in the real industry.

As you can see I am continuing the 0.99 price point. I hope that encourages a few more readers to take a punt and then get immersed in Roisin’s world.

Cont3xt is full of VR, AR and her new Blended Reality kit. It has some new locations and even some martial arts fight scenes. Peril, excitement and adventure, with a load of real World and future technology. What’s not to like?

I hope you give it a download and get to enjoy it as much as everyone else who has read it seems to.

This next stage in the journey has been incredibly interesting and I will share some of that later. For now I just cast the book out there to see whether people will be interested now there is the start of a series 🙂

Price, value, 0.99 and ego for authors

I originally posted this on LinkedIn, but felt I should have it here too. Working out the best place to put things often includes some re-use/re-posting.

Ready to face the World on its own, growing up and leaving the nest.

It has been a steep learning curve, since September 2015, of writing, publishing and selling this first novel, Reconfigure. My initial surprise at managing to write it in a way that came out almost exactly as I had pictured it was then met with the confusion of how to design, price and share it as a product. At each of the steps in that process I took decisions, some were more clear cut than others.

The price of my precious creation was the hardest. I thought that going in too cheap would make the work seem as if it was a throw away piece, published solely for reasons of ego. I thought if it was too expensive, it would of course not reach anyone, and how could I dare charge for something by an unknown writer? That was balanced a little by my own internal hype, that I felt I was not totally unknown. In reality, in the sea of internet presences and products, we are all pretty much unknown, unless you count as a global multi-award winning superstar or win the viral lottery and have something to ride that wave with. They were not generally born with that popularity though. We are all just people.

People, many closer to me than not, seem to enjoy Roisin’s story. I have no idea what people from a wider, more diverse circle feel about it, yet. I sit and worry about reviews: what if I get lots and they say it’s terrible? Equally I worry about people not reviewing because it is just not worth the bother to review. The book is not me though. Looking at it from its perspective, it is happy to be out there ready to be discovered. I have done what I needed to release the story. Now it is the book’s job to win people over. That way I can park this ego, or personal validation need, for downloads and sales and just try and help it along without it defining me.

That is all a bit deep, and potentially odd to hear. However, having written a second book I find my attitudes to its acceptance as part of the series to be different. I am going to send it out there, and whatever happens, happens. I can engineer serendipity a little, by mentioning its very existence, but I can’t make people want to read, or read it. If they want to they will. I do not have a corporate marketing budget to make it part of every waking moment in everyone’s lives. That would be horrible though!

Having run the free promotion days and seen such a big difference in downloads compared with the $2.99 book sales, it is clear that $2.99 is too much. That price point was slightly enforced by the structure Amazon sets. If you want to get 70% as a royalty you can only start pricing at $2.99, which becomes £1.99 in the UK, as a minimum rather than an exchange rate difference. As a new author, and doing all the work, my brain thought, “hey, 70% is a decent amount.” The other rate is 35%. For an ebook that seems woefully low. Yes there is storage of the data, the shop front etc., but Amazon are getting 65% at that low rate. My ego, not my business brain, felt that was wrong. So I couldn’t price any lower than $2.99. I think that may have been incorrect, and my heart leading my head. Getting the book out there is about volume, not individual profits. Whilst Amazon may be taking too much, IMHO, that is what they are taking, that is the playing field, the rules of the game. Amazon, of course, don’t actually care, as a transport mechanism, about my book or anyone’s. They thrive on volume, one product sold lots or lots sold of individual items. So self publishing might cut out a certain part of the middle man process, but it is not generally the creators of anything who gain the most financially.

With this in mind, I just made a change and hit the button, not to go totally free and never get any financial recognition for the work, but instead to the enticing $0.99, or 99p in the UK and other regions. This may make a difference to volumes downloaded. With a second book, yet to be priced, it may entice two sales? Yes I have written twice as much, but 2 x $0.99 is better than 0 x $2.99 and I want people to just read it.
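For anyone who likes to see the sums, here is a rough sketch of that trade-off in Python. It only uses the figures mentioned above (70% at $2.99, and 35% for anything priced lower, such as $0.99) and deliberately ignores things like delivery fees, taxes and regional pricing, so treat the numbers as approximate rather than as what Amazon would actually pay out. The function and variable names are made up for the example.

```python
import math


def royalty_per_sale(price: float, rate: float) -> float:
    """Approximate author earnings for one sale, ignoring fees and taxes."""
    return price * rate


# The two options discussed above.
HIGH_PRICE_ROYALTY = royalty_per_sale(2.99, 0.70)  # roughly $2.09 per copy
LOW_PRICE_ROYALTY = royalty_per_sale(0.99, 0.35)   # roughly $0.35 per copy

# Whole copies at $0.99 needed to earn at least as much as one copy at $2.99.
COPIES_TO_MATCH = math.ceil(HIGH_PRICE_ROYALTY / LOW_PRICE_ROYALTY)

if __name__ == "__main__":
    print(f"$2.99 at 70%: ${HIGH_PRICE_ROYALTY:.2f} per sale")
    print(f"$0.99 at 35%: ${LOW_PRICE_ROYALTY:.2f} per sale")
    print(f"Copies at $0.99 to match one at $2.99: {COPIES_TO_MATCH}")
```

So on these assumptions the cheaper price needs somewhere between six and seven times the sales to bring in the same money, which is exactly why this is a decision about readers and volume rather than profit per copy.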

Will that make any difference? I have no idea. As with all these things there is an element of luck and timing, combined with anything related to the quality and the value of the work. It means I feel much more relaxed about Cont3xt now though. The first book was as if I was buying a lottery ticket for the very first time. Excitement and nerves, hoping for a massive win. That could happen, and sometimes does, but most things require time and effort, presence and demonstration of ability in the field. I may have a long track record in emerging technology, social media, blogging, a bit of TV presenting etc. That does not entitle me to immediately burst onto the scene and expect critical and financial success.

With that new attitude, I can move forward and explore interesting story lines and ways to share them. The work is there if anyone needs it. It does not require my constant presence, it is a product, hosted and available 24/7. It is still my baby, as is Cont3xt, but they are growing up and leaving home. They still need my support, and they are very grateful for the support they have had from everyone so far too, as am I.

Reconfigure is available on Amazon in all regions as an ebook and paperback. Go and give it a try, and maybe write a review too?

Cont3xt, the follow up, is out soon.