I could not resist this. We did not carve it in the end; we went for a more traditional look. Happy Halloween everyone.

Monthly Archives: October 2009

More iPhone ragdoll experiments

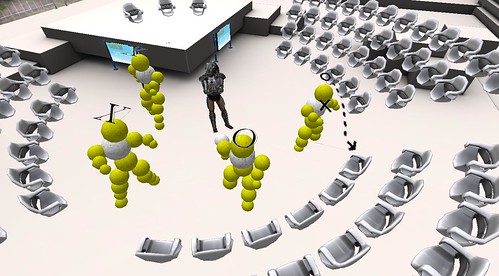

I carried on with a few ideas of what to do with the ragdoll version of my Evolver avatar and ended up with this interesting behaviour, which is sort of where I was heading. It is a form of emergent behaviour caused by the avatar being suspended from the two top spheres using a form of hinge. This, combined with gravity and the bones and joints in the avatar, leads it to create a dancing toy effect that is different each time it is run. Next is to wire the strings up to touch controls, and then I have a full dancing avatar to play with on the iPhone.

iPhone dancing on the ceiling

In my continued look at the quick things that can be done with a Mac, an iPhone, Unity3d and some content (in this case my Evolver avatar), I thought I would see if the bones and animation worked and performed OK on the iPhone.

I was not being overly precise about defining the mappings of the ragdoll, but I did click on a few Kinematic check boxes to see what would happen.

This was the result. It does indeed animate itself. There is nothing I have put in other than asking for joints and physics; it just dances away to itself.

It is amazing, the lengths we used to have to go to in order to make even the most basic animation happen, morphing 2d shapes on Commodore 64s and the like.

It feels to me that we have the potential for a huge bedroom coding movement once again. This was so quick to build and deploy that it's hard to see why we are not all doing it.

Art, U2inSL and experiences in Second Life

My feeds today led me to a brilliant YouTube video over on New World Notes called Impressions by Willow Caldera. The video is wonderfully shot and shows the very creative and expressive side of Second Life. It acts as a reminder (probably to those of us who preach about “business use” or who are finally starting to grok it all) that there is much, much more to all this than a bit of PowerPoint and some business-dressed avatars. I would recommend popping over to NWN and taking a look.

In following the links I saw this video too on the same channel about the U2inSL Warchild benefit gig.

U2 are back in the news again for using YouTube themselves (as opposed to this U2inSL, who are a tribute act). However, I still always manage to get U2inSL into any explanation of what this is all about.

There are several reasons for this.

1. Most people know who U2 are; they are both part of the establishment and known innovators.

2. People listen to music all the time on the radio, iPods etc.

3. Bono is particularly known for charity work too.

Each of those is a way to reach people who don’t yet understand why we are all harping on about virtual worlds. Love or hate U2, it acts as a personal conversation rather than a business one.

What happens at the concerts (as you can see from the video) is effectively a tribute act puppet show/impersonation. Yet it also has a crowd of people willing to watch, listen and feel part of it. They are all passionate U2 fans, and they have gathered to raise some real money for charity by collectively listening to their favourite tunes.

Underlying all that we of course end up with copyright and image rights conversations, but in the case of U2inSL this really is a passionate fan base enjoying feeling connected rather than someone making fake Gucci bags for a quick buck.

As many of us keep pointing out, engaging and supporting these passionate fans, whether you are a band, an author or a manufacturer of widgets, is the key to the world today. Where those people happen to be, and the way they are choosing to interact with one another, is where you need to be joining in. Not a huge capital investment, not masses of PR and spin, but just good old fashioned human to human conversation. Twitter, SL, Facebook…. whatever comes next, you just have to be there.

Predator design on Feeding Edge car

Further to my previous post with some Feeding Edge logos splattered over a Subaru, I just used what little graphic design talent I have to create this multi layered rendition of a predator mask. So I now have both a version of the corporate presence and my own merged together on a GT500.

I think it looks pretty good, but then beauty is in the eye of the beholder.

Update: Using the Subaru scoop as part of the predator mask 🙂

More product placement – Feeding Edge Forza3

Ok so it will not win any prizes for design (yet) but here is my custom Scooby in superb Forza 3.

This user created content means that people I race with online with this car get a Feeding Edge advert for free.

How are you promoting your brand across social networks like Xbox Live?

Thank you to the audience at Smarter Technology

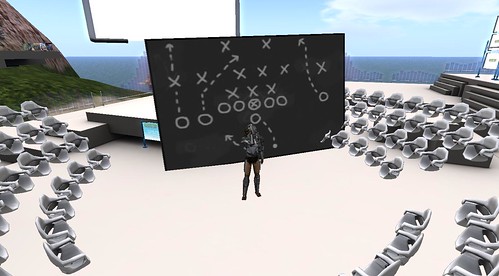

On Tuesday night I was invited to pop along in Second Life to a Smarter Technology event (sponsored by IBM, ironically). Jon and Rissa kindly asked me to show and tell on the various elements of presenting in Second Life that I stumbled upon when preparing for the conference in Derry earlier in the year.

I basically had a pop at all the single screen PowerPoints that we end up doing in world, just replicating what we do in offices. The space and dynamic nature of virtual worlds means we can do so much more. Part of what I do is wear the presentation elements. This is of course a hack around not being able to rez, but it works and has some nice side effects.

I used most of the flavours of the pitch that I wrote about here, and yes, we did get onto 3d printing. So whilst the presentation was about presentation styles, I also tried to inject elements of the content as well so it was not overly meta.

I got Jon to set up my first page on the big screen, and then to break the build with a long, wide collection of slides similar to the ones that can be seen on IQ. This is a presentation trick people use to show the entire pitch and move along it (which is well on the way to breaking the PPT metaphor).

I had also built some examples around the quest for 2d whiteboarding (which we still need), showing how it can be done in a different way in these environments. We have dynamic creation, we have 3d immersion, so why stick to 2d?

(These shots were from when I popped along to check the space out so I did not grief people too much)

Still the favourite was the giant hands though I think.

Photo by Ishkahbibel

So a huge thank you to all who tuned in or attended. The discussions and questions were great. It was mixed mode, in that text flew past and I answered mostly by voice, something that still takes some getting used to. However, the sheer amount of text activity meant I knew everyone was there 🙂

I know right at the end we descended into Mac/iPhone/Xbox/Windows 7 discussions, but it's kind of like the parrot sketch for Monty Python; it's part of a gathering to discuss such things.

If anybody missed an answer to a question they asked, or I missed the question altogether in the live flow then please feel free to ping me inworld, or comment here or twitter or….. I am not hard to find 🙂

IQ and Hursley are still there in world. There are a few plots left to rent on Hursley, and the half a sim on IQ is also available, though it has a furry colony borrowing it at the moment. Let me know if you need some SL space.

Evolver Avatar on the iPhone via Unity3d

It has been a busy week for travel and meetings. However, in the gaps between pieces of work I have been exploring what I can do quickly and simply with the iPhone 3GS. I had been looking at the various 3d toolkits, doing model import by hand.

In the end it struck me as obvious to use unity3d iphone as this just takes away the underlying hassle.

Using Unity3d it was very easy to bring things such as my Evolver 3d model into an app and make them draggable and rotatable using touch. This took approximately 10 minutes to do. I spent more time wondering about the various particle effect parameters than anything else.
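To give a feel for what "draggable and rotatable using touch" boils down to, here is a minimal sketch in Python rather than a Unity script. It is not the code from the app. The `ModelState` class, the `apply_touch` function, the sensitivity constant and the one-finger-rotates, two-fingers-drag convention are all assumptions made up for illustration.

```python
from dataclasses import dataclass

# Hypothetical sensitivity: how many degrees the model turns per pixel of drag.
DEGREES_PER_PIXEL = 0.5


@dataclass
class ModelState:
    """Screen position and heading of the model (illustrative only)."""
    x: float = 0.0
    y: float = 0.0
    yaw_degrees: float = 0.0


def apply_touch(state: ModelState, dx: float, dy: float, finger_count: int) -> ModelState:
    """Return a new state after a touch drag of (dx, dy) pixels.

    Assumed convention: one finger rotates the model around its vertical
    axis, two or more fingers drag it across the screen.
    """
    if finger_count == 1:
        # Horizontal movement becomes rotation, wrapped into [0, 360).
        yaw = (state.yaw_degrees + dx * DEGREES_PER_PIXEL) % 360.0
        return ModelState(state.x, state.y, yaw)
    # Otherwise move the model by the drag distance.
    return ModelState(state.x + dx, state.y + dy, state.yaw_degrees)


state = ModelState()
state = apply_touch(state, dx=90, dy=0, finger_count=1)    # rotate
state = apply_touch(state, dx=10, dy=-5, finger_count=2)   # drag
```

In an engine the same idea would run once per frame using the touch deltas the platform reports, with the engine handling the 3d transform itself.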

Obviously you can do a whole lot more, but it is a massive leap in productivity. It does not require any other strange contortions or going via windows in order to get a good 3d model in.

There are a few quirks in the tool chain, but things like the Unity Remote application that lets you see what's going on via your Mac and streaming media from the iPhone are great. (Hint: if you can't get Unity Remote to compile and deploy, just grab a version from the iPhone store. I lost a few minutes thinking about that!)

I know the hardcore techs will be saying you can build all this from the various bits, cocos, SIO2 etc, but I have had to do that one too many times and I want to explore the ideas for applications rather than the guts of the platform.

Just having this in my hands and using it has created rather a long list of “I wonder if…” apps to create, including using the touch interface to control some Opensim objects.

Sculpty fingers – Crossing worlds – Moving data

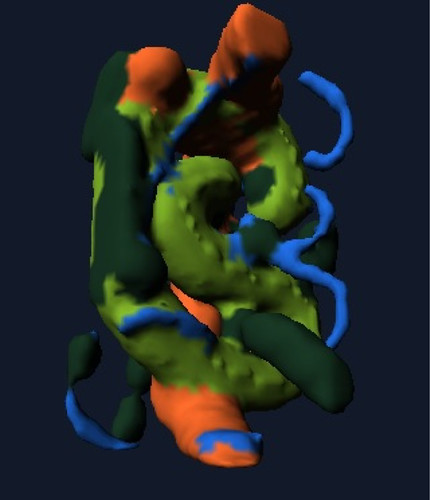

I just bumped into the excellent iPhone application called Sculptmaster 3d by Volutopia. It lets you build and manipulate a 3d model using touch and some simple tools. You can then take that model and export it as an OBJ file via email, or just snapshot it.

It produces very organic results; it feels like modelling with clay in many respects. The key for me is that it is using a different interface, in this case the iPhone. This makes it very accessible and available to people who want to just have a quick go.
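Part of why an OBJ export travels so well between tools is that it is plain text: `v` lines list vertex positions and `f` lines list faces by 1-based vertex index. As a rough illustration, here is a minimal Python sketch that reads just those two line types. The `parse_obj` name and the sample triangle are made up for this example, and it ignores normals, texture coordinates, materials and negative (relative) indices.

```python
from typing import List, Tuple

Vertex = Tuple[float, float, float]
Face = Tuple[int, ...]


def parse_obj(text: str) -> Tuple[List[Vertex], List[Face]]:
    """Read vertex ("v") and face ("f") lines from OBJ text.

    Face indices are converted from OBJ's 1-based numbering to 0-based.
    Every other line type is skipped.
    """
    vertices: List[Vertex] = []
    faces: List[Face] = []
    for raw_line in text.splitlines():
        line = raw_line.strip()
        if not line or line.startswith("#"):
            continue  # blank line or comment
        parts = line.split()
        if parts[0] == "v":
            x, y, z = (float(p) for p in parts[1:4])
            vertices.append((x, y, z))
        elif parts[0] == "f":
            # Entries can be "3", "3/1" or "3/1/2"; the first field is the vertex index.
            faces.append(tuple(int(p.split("/")[0]) - 1 for p in parts[1:]))
    return vertices, faces


# A made-up sample: a single triangle.
SAMPLE_OBJ = """\
# a single triangle
v 0.0 0.0 0.0
v 1.0 0.0 0.0
v 0.0 1.0 0.0
f 1 2 3
"""

vertices, faces = parse_obj(SAMPLE_OBJ)
print(len(vertices), "vertices,", len(faces), "faces")
```

A real sculpt will have thousands of these lines, but the shape of the data is the same, which is why so many tools can read it.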

Given what I was up to yesterday I had to try and export it and upload it to 3dvia, which of course works fine.

Once it is there in 3dvia it is shareable via the 3dvia mobile app, just search for handmade by touch as the model name.

Again I know this is not live augmented reality but I added the sculpture to my garden.

That geotagged photo is then actually appearing in the live Flickr Layer in Layar, so it's another wheel within a wheel.

This is interoperability. Data, and the presence of that data, is able to flow. People allowing import and export of user generated content, either live or by more old fashioned batch means, lets us combine applications and try ideas out instantly.

This sculpture could of course now be manufactured and created on a 3d printer. The data pattern could be sold, licensed, adapted, or dropped into other virtual environments. The loop is complete.

Augmenting Augmented Reality

Whilst having a go with the 3dvia iPhone application I decided to see if I could use my Evolver model, upload it to 3dVia, and get myself composited into the real world. The answer is of course you can; it's just a 3ds model! However, I then wondered what would start to happen if we started to augment augmented reality, even in the light sense. I had done some of this with the ARTag markers way back on eightbar, and also here, putting the markers into the virtual world and then viewing the virtual world through the magic window of AR.

That of course was a lot of messing about. This however, was simple, handheld and took only a few seconds to augment the augmentation.

Or augment my view of Second Life.

So these are the sort of live 3d models we are likely to be able to move in realtime with the 3d elements in Layar very soon.

Likewise we should be able to use our existing virtual worlds, as much as the physical world to define points of interest.

I would like to see (and I am working on this a bit) an AR iPhone app that shows me proxies of Opensim objects projected in their relative positions, and that lets me tag up a place or a mirror world building with virtual objects. Likewise, I want to be able to move the AR objects in my physical view and have their positions updated in Opensim.

All the bits are there, just nearly linked up 🙂

We know it works taking real world input and injecting that into a virtual world (that was Wimbledon et al.), or using marker based approaches such as I did over a year ago with my jedi mind numbers.

I am thinking that between things like the Second Life media APIs or direct Opensim elements knowing what is going on in the world, combined with sharing services for models like 3Dvia and wizards like Evolver, all jammed into smartphones like the iPhone, we have a great opportunity.