It has been a busy week for travel and meetings. However, in the gaps between pieces of work I have been exploring what I can do quickly and simply with the iPhone 3GS. I had been looking at the various 3d toolkits, doing model import by hand.

In the end it struck me as obvious to use Unity3d iPhone, as this just takes away the underlying hassle.

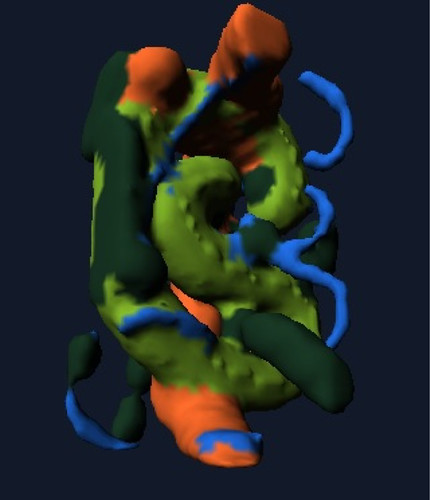

Using Unity3d it was very easy to bring things such as my Evolver 3d model into an app and make them draggable and rotatable using touch. This took approximately 10 minutes to do. I spent more time wondering about the various particle effect parameters than anything else.
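As a rough illustration of what "rotatable using touch" boils down to, here is a minimal sketch in Python. It is not Unity's API and not the code from the app; the `Orientation` type, the `apply_drag` function and the sensitivity value are all made up for the example. The idea is simply that each finger movement, measured in pixels, is scaled into a change of yaw and pitch.

```python
from dataclasses import dataclass

# Assumed sensitivity: degrees of rotation per pixel of finger travel.
DEGREES_PER_PIXEL = 0.5


@dataclass
class Orientation:
    yaw: float = 0.0    # rotation about the vertical axis, in degrees
    pitch: float = 0.0  # rotation about the horizontal axis, in degrees


def apply_drag(current: Orientation, dx: float, dy: float) -> Orientation:
    """Return a new orientation after a drag of (dx, dy) pixels.

    Horizontal movement spins the model (yaw wraps around at 360 degrees);
    vertical movement tilts it (pitch is clamped so the model cannot flip over).
    """
    yaw = (current.yaw + dx * DEGREES_PER_PIXEL) % 360.0
    pitch = current.pitch + dy * DEGREES_PER_PIXEL
    pitch = max(-90.0, min(90.0, pitch))
    return Orientation(yaw, pitch)


if __name__ == "__main__":
    model = Orientation()
    # Simulate three touch-move events from a single drag gesture.
    for dx, dy in [(20, 0), (20, 10), (10, 10)]:
        model = apply_drag(model, dx, dy)
    print(model)
```

In a real engine the per-frame touch delta would come from the platform's input system, and the result would be applied to the model's transform rather than a plain data class.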

Obviously you can do a whole lot more, but it is a massive leap in productivity. It does not require any other strange contortions or going via Windows in order to get a good 3d model in.

There are a few quirks in the tool chain, but things like the Unity Remote application, which lets you see what's going on via your Mac and streaming media from the iPhone, are great. (Hint: if you can't get Unity Remote to compile and deploy, just grab a version from the iPhone store. I lost a few minutes thinking about that!)

I know the hardcore techs will be saying you can build all this from the various bits, cocos, SIO2 etc., but I have had to do that one too many times and I want to explore the ideas for applications rather than the guts of the platform.

Just having this in my hands and using it has created rather a long list of “I wonder if…” apps to create, including using the touch interface to control some Opensim objects.

Sculpty fingers – Crossing worlds – Moving data

I just bumped into the excellent iPhone application called Sculptmaster 3d by Volutopia. It lets you build and manipulate a 3d model using touch and some simple tools. You can then take that model and export it as an OBJ file via email or just snapshot it.

It produces very organic results; it feels like modelling with clay in many respects. The key for me is that it is using a different interface, in this case the iPhone. This makes it very accessible and available to people who want to just have a quick go.
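Part of why OBJ travels so well between tools is that it is plain text: lines starting with `v` are vertices and lines starting with `f` are faces that refer to vertices by 1-based index. The sketch below is a minimal reader for just those two line types, so it is an illustration of the format rather than a full OBJ parser. The function name `parse_obj` and the sample triangle are invented for the example, and negative (relative) face indices are not handled.

```python
def parse_obj(text):
    """Read vertices and faces from OBJ text, ignoring everything else.

    Returns (vertices, faces): vertices is a list of (x, y, z) floats,
    faces is a list of tuples of 1-based vertex indices.
    """
    vertices = []
    faces = []
    for line in text.splitlines():
        parts = line.split()
        if not parts or parts[0].startswith("#"):
            continue  # skip blank lines and comments
        if parts[0] == "v":
            vertices.append(tuple(float(p) for p in parts[1:4]))
        elif parts[0] == "f":
            # A face entry can be "3", "3/1" or "3/1/2";
            # the first number is always the vertex index.
            faces.append(tuple(int(p.split("/")[0]) for p in parts[1:]))
    return vertices, faces


# A made-up single triangle, standing in for an exported sculpture.
SAMPLE_OBJ = """# a single triangle
v 0.0 0.0 0.0
v 1.0 0.0 0.0
v 0.0 1.0 0.0
vn 0.0 0.0 1.0
f 1//1 2//1 3//1
"""

if __name__ == "__main__":
    vertices, faces = parse_obj(SAMPLE_OBJ)
    print(len(vertices), "vertices,", len(faces), "faces")
```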

Given what I was up to yesterday I had to try and export it and upload it to 3dvia, which of course works fine.

Once it is there in 3dvia it is shareable via the 3dvia mobile app, just search for handmade by touch as the model name.

Again I know this is not live augmented reality but I added the sculpture to my garden.

That geotagged photo is then actually appearing in the live Flickr Layer in Layar, so it's another wheel within a wheel.

This is interoperability. Data, and the presence of that data, is able to flow. People allowing import and export of user generated content, either live or in more old-fashioned batch, means that we can combine applications and try ideas out instantly.

This sculpture could of course now be manufactured and created on a 3d printer; the data pattern could be sold, licensed, adapted, dropped into other virtual environments. The loop is complete.

Augmenting Augmented Reality

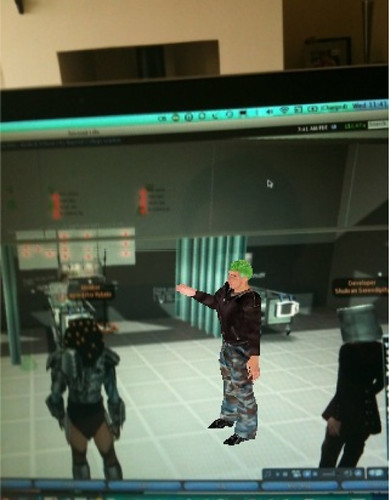

Whilst having a go with the 3dvia iPhone application I decided to see if I could use my Evolver model, upload it to 3dvia, and get myself composited into the real world. The answer is of course you can, it's just a 3ds model! However, I then wondered what would start to happen if we started to augment augmented reality, even in the light sense. I had done some of this with the ARTag markers way back on eightbar, and also here, putting the markers into the virtual world and then viewing the virtual world through the magic window of AR.

That of course was a lot of messing about. This, however, was simple, handheld and took only a few seconds to augment the augmentation.

Or augment my view of Second Life.

So these are the sort of live 3d models we are likely to be able to move in realtime with the 3d elements in Layar very soon.

Likewise we should be able to use our existing virtual worlds, as much as the physical world to define points of interest.

I would like to see (and I am working on this a bit) an AR iPhone app that shows me proxies of Opensim objects projected in their relative positions, and that lets me tag up a place or a mirror world building with virtual objects. Likewise I want to be able to move the AR objects from my physical view and update their positions in Opensim.
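To make the "relative positions" idea a little more concrete, here is a small sketch of the coordinate mapping such an app would need. It assumes a virtual region is pinned to the physical world at a chosen anchor latitude and longitude, that region coordinates are in metres, and that x runs east and y runs north. Those are assumptions for the example, as are the function names and the anchor values; it does not use any Opensim or Layar API, and it uses a simple flat-earth approximation that is only sensible over a few hundred metres.

```python
import math

EARTH_RADIUS_M = 6_371_000.0  # mean Earth radius in metres


def region_to_geo(x_m, y_m, anchor_lat, anchor_lon):
    """Map region-local metres (x east, y north) to latitude/longitude.

    The anchor is the physical location assigned to region position (0, 0).
    """
    dlat = math.degrees(y_m / EARTH_RADIUS_M)
    dlon = math.degrees(
        x_m / (EARTH_RADIUS_M * math.cos(math.radians(anchor_lat)))
    )
    return anchor_lat + dlat, anchor_lon + dlon


def geo_to_region(lat, lon, anchor_lat, anchor_lon):
    """Inverse mapping: where an AR object moved in the physical view
    would sit in the region, in metres from the anchor."""
    y_m = math.radians(lat - anchor_lat) * EARTH_RADIUS_M
    x_m = (
        math.radians(lon - anchor_lon)
        * EARTH_RADIUS_M
        * math.cos(math.radians(anchor_lat))
    )
    return x_m, y_m


if __name__ == "__main__":
    # Hypothetical anchor: an arbitrary point, chosen only for the demo.
    anchor = (51.0, -1.0)
    lat, lon = region_to_geo(128.0, 128.0, *anchor)
    print("object appears at", lat, lon)
    print("and maps back to", geo_to_region(lat, lon, *anchor))
```

The first function covers seeing virtual objects in the physical view; the second covers the reverse trip, taking a moved AR object and working out the position to write back to the virtual world.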

All the bits are there, just nearly linked up 🙂

We know it works taking real world input and injecting that into a virtual world (that was Wimbledon et al.), or with marker based approaches such as I did over a year ago with my jedi mind numbers.

I am thinking that between things like the Second Life media APIs or direct Opensim elements knowing what is going on in the world, with a combination of sharing services for models like 3dvia, and wizards like Evolver, all jammed into smartphones like the iPhone, we have a great opportunity.

Bionic Eye – Augmented Reality on the iPhone (officially)

@kohdspace just tweeted this piece of news from Mobile Crunch.

After what amounted to some terms of service violation hacks, where people built AR applications for the iPhone, it seems that this Bionic Eye is an official App Store approved version.

I don’t have an iPhone (yet) despite being a registered iPhone developer, but if the APIs are released and working I expect a huge flood of AR applications, and I suspect there are some very clever ideas yet to be implemented.

I want to be able to create my relationship between the data and the real world using a mirror world or control deck in 3d in somewhere like Second Life and then be able to effectively push new AR overlays and contexts out to people.

I think this will feel a little like the renamed Operation Turtle, now called Hark, but for AR. In fact Hark is a handy name, isn’t it, as it already has the letters AR in it. There I go thinking out loud and inventing things.

I would love to hear if anyone has this bionic eye app already, or if anyone is working on a mashup with the virtual worlds we already have rather than just GPS overlays.

This is what a mobile device is for. AR

In the quest for mobile communication the telecoms companies have tended to stick with the same principles. We need a mobile phone to carry voice, we need a camera to take pictures so that we can have a video conversation (that has not quite seemed to take off). Text messaging with SMS was a bit of a surprise, and point to point MMS is not quite what people wanted. The rise of the social web, communicating in plain sight online, has more than ever driven the take up of data plans, far more than downloading movies or on the move TV, it would seem.

Augmented reality is in a gap though. When you take the cameras, which accidentally evolved to show the world rather than the user, and add the processing power onboard and the recent huge rise in combined device capabilities, we start to see yet more Augmented Reality.

This is a great iPhone example from some guys at Oxford in the UK.

It is impressive as it is in effect markerless, using the environment to figure out registration points.

The new iPhone 3GS, with its compass and even more ways to tell which way is up, also lends itself to this sort of game control mechanism, which is not Augmented Reality as such, though it is a branch of the field. Here the real world is used as the control mechanism for the virtual world. It does not matter where in the real world you are; the virtual world is always “inside”, on the device.
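As a small illustration of using the compass as a controller, the sketch below turns a compass heading into a steering value for a virtual camera. It is a generic piece of angle arithmetic, not an iPhone API; the function name `heading_delta` and the idea of a "reference" heading captured when the game starts are assumptions made for the example. The one subtlety is wrap-around: going from 350 degrees to 10 degrees is a 20 degree turn to the right, not a 340 degree turn to the left.

```python
def heading_delta(current, reference):
    """Signed turn, in degrees, from the reference heading to the current one.

    Positive means the device has turned clockwise (to the right).
    The result is always in the range [-180, 180).
    """
    return (current - reference + 180) % 360 - 180


if __name__ == "__main__":
    reference = 350  # heading captured when the game started
    for heading in (350, 0, 10, 340):
        # The virtual camera's yaw follows how far the player has turned.
        print(heading, "->", heading_delta(heading, reference))
```

Because only the change in heading is used, it does not matter which way the player was facing to begin with, which fits the point that the location in the real world is irrelevant to the virtual world on the device.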