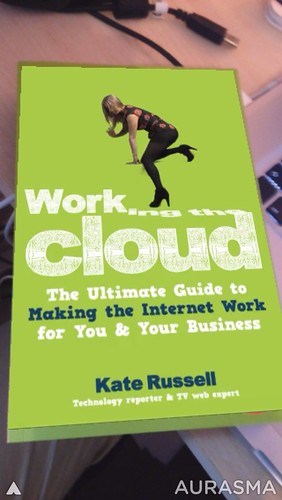

I was pleased to get a chance to head to London last Friday for the launch of Kate Russell’s new book Working the Cloud. Amazingly, this is Kate’s first book, which is surprising given how often she has brought us interesting tech news on TV shows like BBC Click. Mind you, I have not written a book yet either 😉

So yes, I was at the launch party along with other media people and fellow tweeters, but I wouldn’t write about something unless I thought it had something going for it. This book and its supporting material certainly do.

So what is the book all about? Well, it is a fantastic curated collection of useful tools to help anyone who has not yet fully realised the power of the web in getting things done. Starting a business, communicating with customers, creating interesting content: it is all covered.

It is not merely a collection of URLs, as each entry explains the benefits of a particular tool or web application. Each section also offers more than one alternative and is peppered with tips. This is because Kate is writing about things she has used, is using and, in some cases, has stopped using in favour of a better alternative.

This is really an expression of Maker culture, applied to the more mainstream ability to just get on with things. Too often people want to read lots of instructions for each application, making decisions as if there were no way to change them afterwards. I think the book points to a spirit of doing. There is a stack of tools out there; they are accessible, often free or very cheap, and there is no excuse for not using them.

To prove the point that there is more than one way to UV unwrap a feline polygon model, Kate has done more than just publish a book. There is an ongoing companion website, http://workingthecloud.biz/, and there are apps for mobile devices too.

The cover of the book itself is also an augmented reality trigger for Aurasma’s app. It is worth a look (and a listen) if you see a physical copy of the book in a shop. The intro (with the sound off) can raise a few eyebrows if someone is looking over your shoulder 😉

Kate also did a virtual book signing. Oddly, I missed this as I was in a Choi Kwang Do class at the time. However, it was a transmedia gig using Google Hangout.

There were some tweets with @andypiper about the other first virtual book launch, back in 2006 in Second Life, which I remember very well 🙂

This is, of course, a different sort of event 🙂 so we are not going to take Kate’s first away from her 😉 Still, the next edition of the book must have some 3D virtual world communication in it. Who knows, maybe I can guest write that 🙂

I am looking forward to Kate’s next book: she crowdsourced the funding on Kickstarter to write some science fiction for the new Elite game. That again proves she is doing all the things in the book, not just writing about them.