For many years I have seen the CES show appear in magazines, then on TV and then of course all over social media. As a long-time tech geek and early adopter I have always wanted to attend, but have never been able to. In my corporate days getting approval for a train to London was a chore. As a startup I never had the time or money either, preferring to invest in gadgets like the Oculus Rift or paying for a Unity license so I could build things. On the TV show we talked about CES, and if we had gone to a 4th series it was on the cards.

This year, with my industry analyst role in IoT, I was able to go. Of course, as a work trip it was a bit different to just being able to take the show in.

I had briefing after briefing, with a bit of travel time in between, for the 2 main days I was there. One thing that is not always obvious is just how big the show is. Firstly there is the Vegas convention centre, with North and South Halls, that is bigger than most airports. It was so big that I only really got to visit the South Halls; the North Hall of cars, a motor show in its own right, eluded me. All the first day's meetings were around the South Halls. Day 2 was at the other areas of the show: the hotels have their own convention centres, and floors and suites get rented out too. The Venetian Sands, Bellagio and Aria all had lots going on, each as big as any UK show, it seemed.

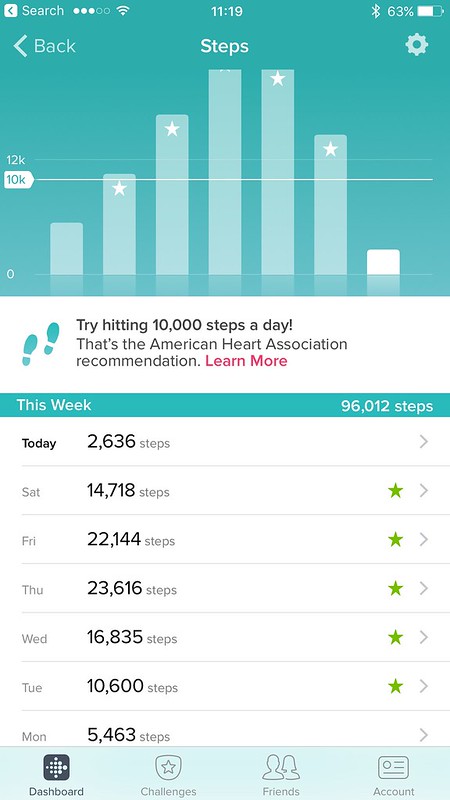

Walking around 9-10 miles a day at a trade show, and still not seeing everything, gives you an indication of the size.

Again, I pretty much missed most of the expo floor because of meetings, but the day I left I had an hour to pop back to the Sands main hall and see some things.

The split across the entire show, from giant corporate powerhouses to tiny startups with a single table, was amazing. I had assumed it was all the former, but the latter is heavily supported, and with Kickstarters and maker culture now mainstream it will continue to be really important.

One thing I was there to see was how much Augmented Reality was taking off, running parallel with the VR wave. There were a lot of glasses and of course the HoloLens and the industrial focussed DAQRI Smart Helmet. It is still not really there as a consumer focus yet, though the Asus Zenfone AR, powered by Tango and Qualcomm, was announced. It is not on sale until later in the year (no date given), but it may put true AR into people’s hands.

HoloLens

DAQRI Smart Helmet

It was CES’s 50th anniversary, and that was fitting given I turn 50 this year too. It may not be the most exciting bucket list tick, but I have already done lots of mine and need to refresh the list anyway. I am not sure that will include riding in this human-sized quadcopter that you fly with a smartphone though!

I guess we best experience everything before these guys and their brethren take over.

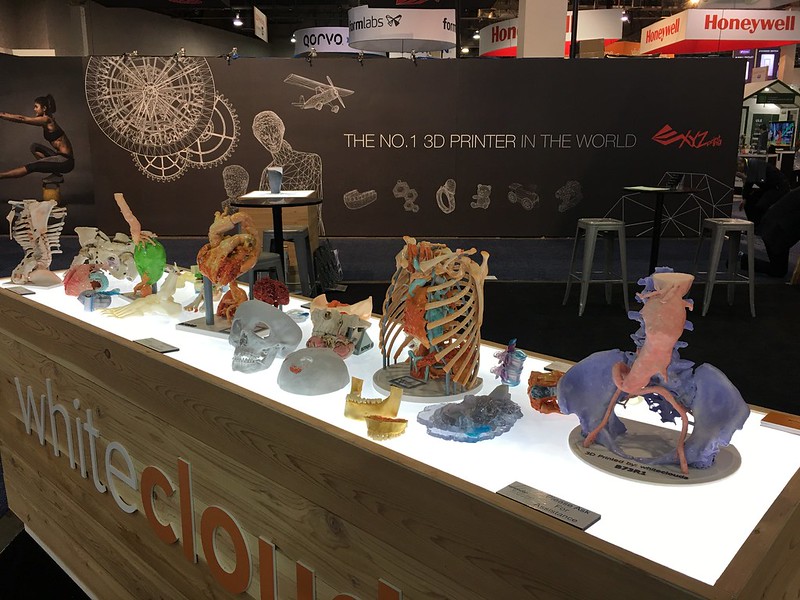

Still, at least we can 3D print new parts for ourselves.

As you will see in this album, the whole place just becomes a blur of everything looking the same: lights, sound, people, attract loops etc. All very fitting to be in Vegas.

@xianrenaud and I wrote a spotlight piece for 451 Research as a show roundup, "CES 2017: connected, autonomous and virtual". It may end up outside the paywall, but the title is there in case you do have access.

So that made a whirlwind start to this year. This time last year I was publishing Cont3xt and wondering what the next steps were going to be. This year I have stacks of IoT research and writing work to get on with, a 50th birthday to not get worried about, imminent wisdom tooth removal (yuk) and, all being well, a 2nd Degree/Il Dan black belt test in Choi Kwang Do. So onwards and upwards. Pil Seung!