It always amazes me how there is always something to learn or be surprised by in tech. You think you have a handle on it, and then you see or somehow sense something else. I recently got hit with a thought about the relative sizes of avatars in a shared virtual space/metaverse application and what that can be used for. I have often talked about the scale of virtual worlds being a mutable thing: we can be subatomic or at the scale of a universe in a virtual environment. I have also talked about being the room or place, i.e. a sort of dungeon master approach, controlling the room (as a version of an avatar) whilst others experience something about that place.

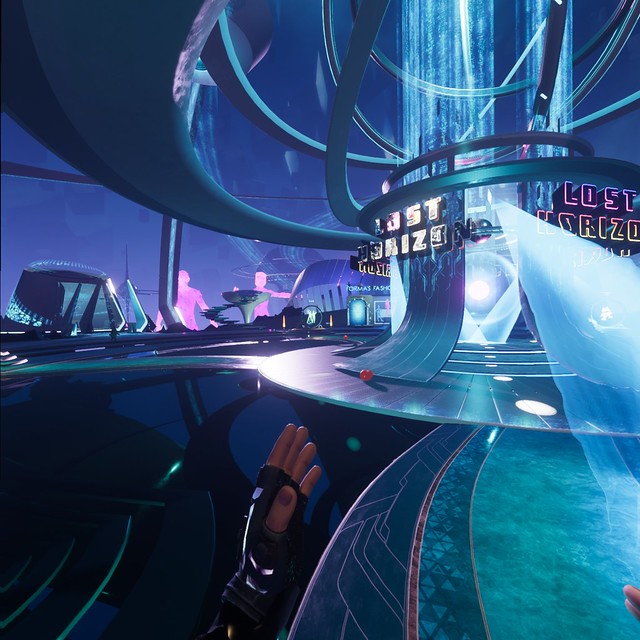

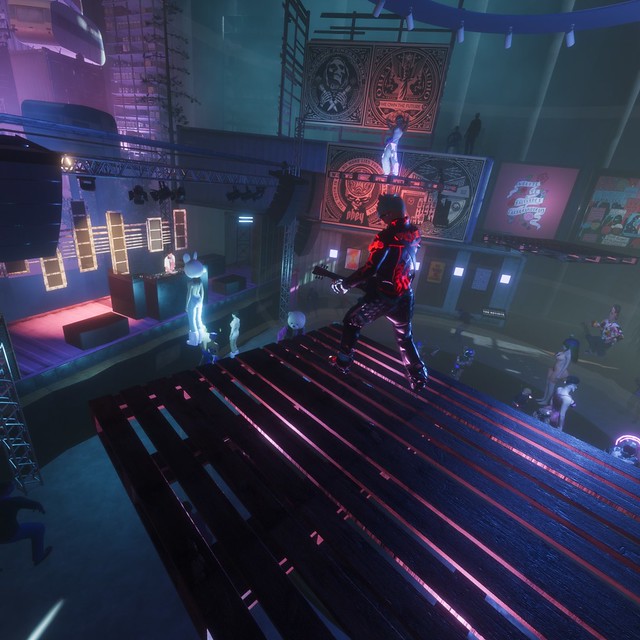

The first experience that got me thinking about relative avatar size was getting to play Beat Saber as a 3 player VR game. I had done 2 player before, where you see your fellow player off to the side facing you but experiencing the same song and blocks. You are so busy playing and concentrating, but it is a good experience to share. However, once there are more than 2 people, 3 in this case, you are each on your own track but facing a common central point, like being on the points of a star. The player who is doing best has their avatar zoomed and projected up towards that central point, as a reminder that you need to work harder. It's a great mechanic, and it uses avatar size as a communication mechanism, i.e. they are better at Beat Saber than you.

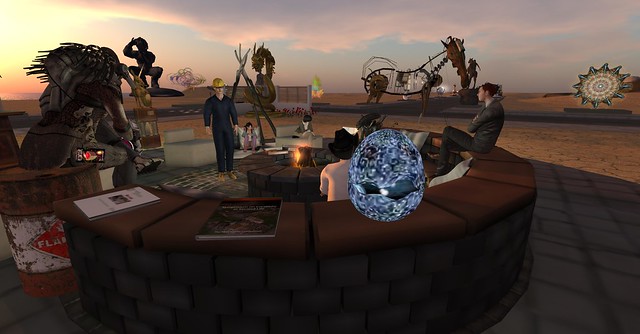

The next experience was to properly play the VR dungeon crawling, turn based dice and card game Demeo as a multiplayer. This game has you and your friends gathered around a board decked with figurines. You are represented by your hands, to pick up the pieces and move them or to roll the dice, and by a set of glasses or a small mask. The avatar is not a whole thing, no need for legs etc. The board is the thing you all want to see. Each player can change not only the direction of the board just for them, by dragging it around, but also zoom in and out to see the lay of the land or get right in and look at how the characters have been digitally painted. The game is collaborative and turn based, and you get to see the other players' hand and mask avatars. Here though is the twist: you also get a sense of whether the other person is stood looking around the table top or has zoomed in close, because the avatar you see of them scales up and down in size according to their view of the world. Not only can you see the direction they are looking, but also how detailed their view might be. If you are zoomed in and you look up, you see the giant avatar of your fellow player looking at you. This is all very fluid, gameplay is not messed up by it, and it shows a level of body language that only really exists in metaverse style applications. The VR element makes it feel even better, but the effect works on 2D screens too.
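For anyone who wants to tinker with the idea, here is a minimal sketch of how that relative scaling could work. This is my guess at the principle, not how Demeo actually implements it: `PlayerView`, `zoom` and `apparent_avatar_scale` are all made-up names, and I am assuming each player has a single board magnification value where 1.0 means stood at the table.

```python
from dataclasses import dataclass


@dataclass
class PlayerView:
    """Hypothetical per-player view state (not a real Demeo structure)."""
    name: str
    zoom: float  # board magnification; 1.0 = stood at the table, 4.0 = zoomed right in


def apparent_avatar_scale(viewer: PlayerView, other: PlayerView) -> float:
    """How big `other`'s avatar should look from `viewer`'s point of view.

    Assumption: zooming in on the board is the same as shrinking yourself
    relative to it, so the size you see is the ratio of the two zoom levels.
    """
    if viewer.zoom <= 0 or other.zoom <= 0:
        raise ValueError("zoom must be positive")
    return viewer.zoom / other.zoom


me = PlayerView("me", zoom=4.0)          # zoomed in to admire the paintwork
friend = PlayerView("friend", zoom=1.0)  # stood back looking over the table

print(apparent_avatar_scale(me, friend))  # friend looks like a giant to me
print(apparent_avatar_scale(friend, me))  # I look tiny to my friend
```

The nice thing about a ratio is that two players at the same zoom always see each other at normal size, however far in or out they both are.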

We often talk about a sense of presence, remembering that you were next to someone in a virtual place, but this is another dynamic that is obvious, though only obvious now that I have experienced it. Anyway, that’s the thing I learned earlier this week. Also, the image above started as a single DALL-E sentence for the AI generation, but I also tinkered around with the new web editing that lets you add new frames of generation to existing images, creating AI generated composites! The stately home was an accident, but somehow looks very much like IBM Hursley, my old physical and virtual home as a metaverse evangelist in 2006. The ability to create an image and a feeling for this post, not just grab a screenshot from the games (which is always tricky to get right when it involves other players), is also rather cool. It is the first time I have created an image for a subject, rather than image generation being the subject. The future is arriving fast!

Anyway, avatars, not always what you think they are, are they? 🙂