As I was on the roster at the rather excellent GOTO conference, Ben Linders asked me a few questions about virtual environments and where they fit in the world of software engineering. This turned into a slightly longer interview, which has just gone live. It is always interesting to frame answers about virtual worlds in a slightly different context or industry, but of course when it comes to the software industry, the fact that this is all software anyway gives the conversation a nice meta element.

The article is live here.

Along with my super g33k bio picture 🙂

It is also cool that you can now just search “epredator” on InfoQ and ta-da! 🙂

I guess this may seem like more of me going on about the same things, but there is a reason for that. These technologies make a huge difference to a lot of use cases. I am getting more calls and questions again (post bubble) about how these may work. Ignoring the possibilities, for anyone or any industry, could be costly this time around. I just want to help 🙂


Rocksmith 2014 Session mode

I am a big fan of Rocksmith. It has been a breath of fresh air as a way to learn and enjoy guitar, and now they are ramping up for the next edition of it. Luckily all the downloaded songs are still transferable. (Well, less luck and more a necessity!)

I often use it as an example in my talks like the one I am giving this Wednesday at BCS Shropshire.

An interesting new feature has been added, though, so my talk has to evolve to keep up: session mode, with a reactive and generative music backing band. You play and they keep up. This is really interesting and I can’t wait to see how it pans out with my guitar tinkering. At the E3 conference Jerry Cantrell of Alice in Chains took to the stage. Just listen to how, once he has gone through a few customisation options using voice, the band kicks in and responds to his playing.

🙂

Not just handbrake turns – GTA V

The original Grand Theft Auto was a great game: a top-down scroller with cars that had very pleasing handbrake slides as you zoomed around the city, in a sort of glorified Pac-Man variant. That sells it a bit short, but as the creators once called it that, I think I can :).

There is a complete history here on Games Radar.

I loved the top-down scrolling car action, not least because I had written a few demos along those lines, including the cars game that was part of the developer kit for the old firm’s “BIG” proof of concept for in-game micro transactions back in 2004 (yes, they should have stuck with it, but what can you do!).

So when the 3D versions of GTA started to offer more variety, while definitely keeping that level of fancy driving, it was fantastic.

I have always marvelled at the size of the free-roaming worlds in many of these games. They just get bigger and bigger. They are not random either; they are designed, intricately designed! The entire metaverse now becomes a backdrop for narrative, not just sliding around. The good thing, though, is that you can ignore the story and just have some fun razzing around in cars listening to music.

If you are not a gamer and you have not watched this new video of Grand Theft Auto V, the fifth installment and the latest and greatest, I urge you to give it a look, just to get a feel for the scale of these games. I am sure GTA V will not disappoint; they have just got better and better, and more and more varied. They are a fantastic achievement in games and technology too.

Adventures with Photon and Unity3d

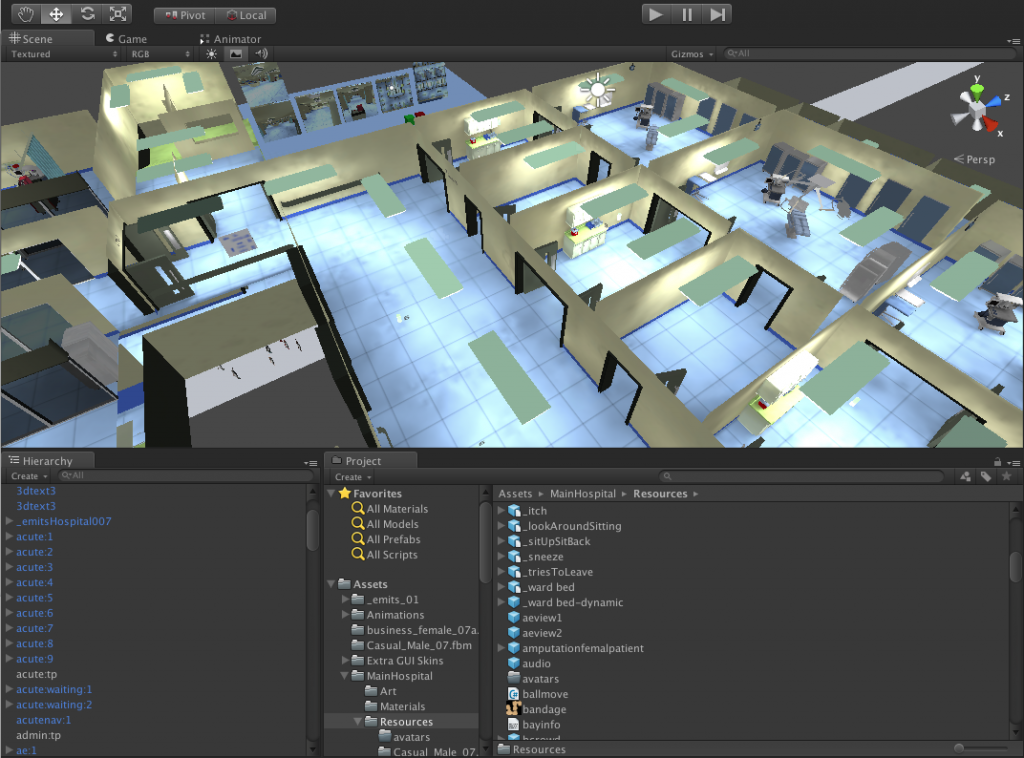

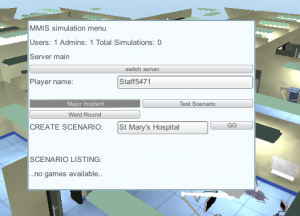

The Unity3d hospital I have been working on has, up to now, been running on the Photon Cloud. (Photon Server from Exit Games is a socket server that allows client applications, like those in Unity3d, to talk to one another. They run a simple-to-use hosted version called Photon Cloud, which is great for testing things out.)

I decided, though, that some of the traffic we were pushing through might break the tiers for hosting on the cloud, so I thought I would run my own server. It was not the number of concurrent users: we have a few users, but they do a lot, rather than a lot of users each doing a little, which is the general profile for gaming.

In part that is because one of the Unity clients acts as the master for the application. It holds a lot of simulation data, and changes to that have to be communicated (in various cached ways). If I had built it as server logic we might have cut down on traffic, but we would have had to stick to a single way of working. As it is, the application is also designed to fall back to disconnected mode and can be run as a non-networked demo (though that has its own challenges).

I did have a few difficulties to start off with, but many of those were actually very simple to solve, and they all make sense if you read some of the annotations in the docs.

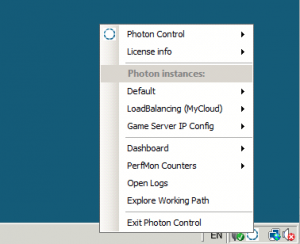

I sparked up a Rackspace Windows Server 2008 R2 machine first, as Photon is Windows based. I had dabbled with the Azure cloud hosting version, but much of that required a Windows development environment to deploy from, and I am a Mac user with only occasional Windows use 🙂 So it was much simpler to have a Rackspace server and use Remote Desktop to attach to it.

Downloading the files via Remote Desktop was a problem to start with, due to all the various firewall restrictions, so there was a bit of clicking around in Windows admin.

I followed the 5 minute setup (kind of). Once it is downloaded, you end up with a set of bin directories for the Photon control, plus directories with the various server applications and configurations.

All I needed was a lobby host and then simple game rooms to broker the data flow and RPC calls. I had no extra server logic: if something moves in Unity3d on one client, it needs to move on the other.

I didn’t have much luck when I asked Photon to start the Default application. I was not getting connected, so I added a few extra firewall rules just to be on the safe side. I was starting to wonder if I could get to the hosted machine at all, but I think some other network problems were conspiring to confuse me too.

Then I read that if you are switching from Photon Cloud to your own server you should use the other application configuration, cunningly named Loadbalancing(MyCloud). I switched to that and ran the test client on the actual server, and things seemed better. Still no luck connecting from Unity3d, though. Then I looked at the menu option labelled Game Server IP config. It was set to a local address, so obviously the server was not going to make itself known to the outside world. A simple click to autodetect the public IP and I was able to connect from Unity3d.

It all seemed good until, after a few connects and disconnects, it started to throw all sorts of errors.

I had to ask on the forum and on Twitter, but just by asking the question I started to think about what I was actually doing and what I was running. I was glad of the response from Exit Games, though, as it meant that I was going along the right path.

Again, it is obvious but… the MyCloud application config that I was using had one master server and two game servers. It did say it was not for production, but as this is not a massive-scale game I thought I wouldn’t touch any configs. It looked like the master server was getting to the point of asking each game server which was the least busy (to direct traffic to it) and getting the answer that they were both maxed out. I initially thought I needed to add more game servers, but it was actually the opposite. Removing one of the game servers from the config (effectively removing any load balancing logic) meant the same game server got all the connections. The load balancer is really there for other machines to be brought into the mix.
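A toy model may make that failure mode easier to picture. This is not Photon's load balancing code; the `GameServer` class, its `load` and `capacity` fields and the `pick_game_server` function are all hypothetical, written in Python purely for illustration. The master picks the least busy game server that still has room, and if every server reports itself as full there is nowhere to send the player.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class GameServer:
    name: str
    load: int      # hypothetical: current number of peers on this server
    capacity: int  # hypothetical: load at which the server reports itself as full

    def is_full(self) -> bool:
        return self.load >= self.capacity


def pick_game_server(servers: List[GameServer]) -> Optional[GameServer]:
    """Return the least loaded server that still has room, or None if all are full."""
    candidates = [s for s in servers if not s.is_full()]
    if not candidates:
        return None  # the master has nowhere to direct the connection
    return min(candidates, key=lambda s: s.load)
```

With only one game server in the list there is no real choice to make, which mirrors the effect of cutting the config down to a single game server.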

Having decided that was what was happening, I cut down the config files but still found that after 30 minutes of running I got conflicts. I did say that in the forum post too. However, I had not fully rebooted the Windows box, only restarted the Photon server. I think there is a lot of shared memory and low-level resources in play. A few reboots and restarts later, things seem to be behaving themselves.

The test will be today, when the scenario is run with several groups of 5 users, all running voice too.

I have put some server fallback code in, though, to allow us to switch back to the Photon Cloud if my server fails. For a while I was publishing two versions, one for the cloud and one for my server. That was getting impractical, as each upload on my non-Infinity broadband was taking 45 minutes, so any change had a 90+ minute round trip, not including the fix itself.
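The fallback can be sketched as an ordered list of endpoints tried in turn, so that one published build covers both cases. This is a minimal Python sketch, not the actual Unity code: `connect_with_fallback`, the endpoint labels and addresses, and the `try_connect` callback (a stand-in for whatever the real Photon connect call and its success check are) are all hypothetical.

```python
from typing import Callable, List, Optional, Tuple

Endpoint = Tuple[str, str]  # (label, address); both hypothetical


def connect_with_fallback(
    endpoints: List[Endpoint],
    try_connect: Callable[[str], bool],
) -> Optional[str]:
    """Try each endpoint in order and return the label of the first that connects.

    Returns None if every endpoint fails, so the caller can drop into
    a non-networked demo mode instead.
    """
    for label, address in endpoints:
        if try_connect(address):
            return label
    return None
```

Returning `None` when nothing connects gives the caller a single place to switch to the disconnected mode mentioned earlier.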

GOTO Amsterdam 2013 conference

Today I am heading off to the #gotoams conference in Amsterdam. I am really looking forward to this one. I have a whole day to attend tomorrow before giving my blended reality talk on Wednesday, and also a lightning talk on MMIS.

There is lots more info on the website, and if you are going, come and say hi.

I am looking forward to the track “RISE OF EDUCATIONAL TECHNOLOGY STARTUPS” too, as that crosses over with what I talk about and work in, and with the recent IGBL in Dublin.

I have not been to Amsterdam since the 2011 Metameets, which I have some great memories of too, being with so many like-minded metaverse people at the time.

It does mean I will miss a few Choi lessons this week, but as I am talking about Choi (in part) and will no doubt wake up early in the hotel, I am sure I will get some practice in for my grading next week 🙂

IGBL – Learning from Games Based Learning

Last week I popped over to Dublin for the Irish Symposium on Games Based Learning at the Dublin Institute of Technology. It is a few years since I was last there, at Metameets, listening to and talking about virtual worlds.

There is a very influential core of virtual world people there, and many of them were at the conference, so it is always good to have a real world/SL meetup.

Dr Pauline Rooney from DIT opened up the conference and it felt like I was in the right place.

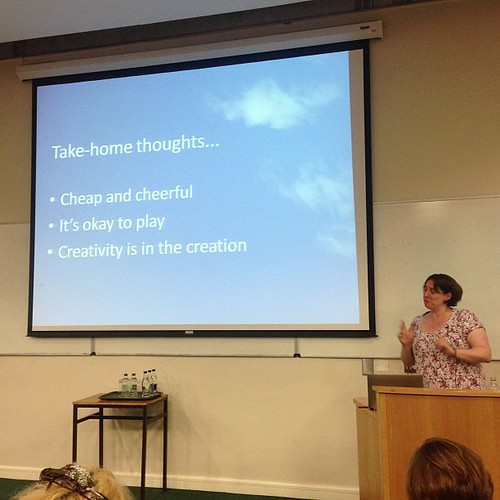

The opening keynote, “Game Based Learning in an Age of Austerity”, was by Dr Nicola Whitton from Manchester Metropolitan University. I then knew I was in the right place, because everything Dr Whitton said resonated with so many projects. The key theme was that you don’t have to spend millions of pounds making games for learning, and they don’t all have to be technical deliveries of games either. Making a game out of making a game can be as beneficial as putting people through the end product.

She showed an example of the sort of thing that happens in medical sims and games: lots of huge, expensive graphics and realism with a text multiple choice question on top. Having had to build some things like that to meet requirements, but having also subverted that with simpler ideas and gaming concepts, I was sat there nodding in agreement.

The other point, as you can see in the take-home points above, was that it is OK to play. Humans learn through play, yet it still gets regarded as not serious enough. She told tales of people expecting to put away childish things, and of how restrictive their thinking then gets when faced with exploring and creating in a playful way.

After a quick coffee I chose to stay in the same room for the next track.

Neil Peirce from the Learnovate Centre, Trinity College Dublin, presented his academic paper “Game Based Learning for Early Childhood”. I did not get so much from this, though I am sure the research was valuable; really I needed to read the paper. However, this was an academic conference and I am not technically an academic, so it was not aimed at me 🙂

I was more interested in “An investigation into utilising a Therapeutic Exergame to improve the Rehabilitation Process”, where the Waterford Institute of Technology had worked with physiotherapists and used a Kinect, Unity and Zigfu to explore repetition of exercise in the home. This appeared successful, and fitted well with my experience of Kinect and how we can broadcast and work together physically using these devices.

Next up was a great Prezi presentation by S. Cogan from the National College of Ireland, explaining “engagement through gamification”. Whilst the word gamification is now tired and overused, he presented it from the point of view of not knowing what it was or how he was going to use it, and shared what worked and didn’t work in getting the students he lectured to be bothered about class. The positive results were that he got much better attendance and pass marks, but also that he himself felt a greater sense of achievement making some of the activities more game-like. It was very inspirational to hear a younger lecturer looking to change things around a bit, but not completely overturn the system. It all just made sense, and sharing what failed (wrong rewards, too much game in some things etc.) was very refreshing.

After lunch it was 2 hour workshop time, so I popped along to “Developing games for Learning using Kinect”. I was not sure what to expect; I thought I might be able to help out as well as have a bit of fun myself. I had not fully realized that it was Stephen Howell, the creator of the very cool Kinect for Scratch. It was great to hear @saorog explain Scratch to an audience of mostly non-techy people and get them programming in the same way it’s done with kids, then hit everyone with the simplicity of using the Kinect in Scratch. It was very well done and very well delivered; he is a clever bloke 🙂 He also showed us his Leap Motion controller in action 🙂

After a bit of Irish culture in the evening came almost no sleep, as my hotel room had no double glazing, so the ongoing partying in Camden Street and the subsequent bottle deliveries pretty much filled all the early morning….

Elfeay and I did our little pitch, “I am a Gamer. Not because I don’t have a life, but because I choose to have many”. We each did an 8 minute chat on how we have found our various tribes, and how games, games technology and culture have led us to places that then feed into more games and games culture. My example was the journey into Choi Kwang Do via games and tech, plus a few other things thrown in 🙂 It provoked some good workshop-style discussion too. It was also great fun to do in that format, and huge thanks to Elfeay for getting it all on the roster 🙂

It also dovetailed nicely into some Pecha Kucha sessions, where slides are timed and there are a given number in a given time slot. It is almost the presentation equivalent of a Vine or a tweet: concise, well planned to fit, and it delivers a lot in a small package.

Dudley Turner from the University of Akron in the States did a great piece on “developing a quest-based game for university student services”. It turns discovering what is available to new students on campus into a mix of alternate reality pieces of information, like emails and mini websites, linking a narrative to real-world treasure hunting, with Aurasma AR location-specific tags to get them to places. It is all part of a course they have to take anyway, but this gets them out and about and engaged with the places they need to be at, not just reading and looking at maps.

Next was P. Locker presenting “The snakes and ladders of playing at design: Reflections of a museum interactive designer, game inventor and exhibition design educator”. This was refreshing, as it was not really about the tech end of things but about the core of play and interaction: physical installations, and how being a board game designer helped create museum pieces and teach others how to make them. There are elements of practicality and robustness in the physical aspects to consider, as well as the learning aspects.

Finally, last but by no means least, S. Comley from the University of Falmouth talked about “Games based learning in the Creative Arts”. It was surprising to hear that the arts were not heavily involved in using games in the learning process. It seems the human tendency to stick with what you know hits everywhere. It will be interesting to see where her research takes her, as this was a precursor to a much bigger piece of work, stating the problem and the potential benefits, in particular for getting cross-discipline interaction in the academic arts.

That was it for the main tracks. I missed a lot, as a lot was on at the same time, but it was all brilliant.

It ended with a keynote from Fiachra O Comhrai from Gordon Games, “Using game science to engage employees and customers in the learning, knowledge sharing and innovation process”. What was interesting here was that Gordon Games was formed by non-gamers. Mr Comhrai said he formed the company and then decided to look at some games. I felt slightly uneasy at that, but good on them for doing it anyway. As a long-time gamer who both plays and analyzes what is going on, it felt like my 40 years of gaming experience was being summed up as something you pick up in a weekend on Xbox. I am not sure that is what he meant. Also, much of their work is in call centres, and though we did not get to see many examples of Gordon Games’ work, we did get to see some fun videos from other people.

After that some of us Second Lifers headed off for lunch before I dived into a taxi and headed home.

Here we all are looking very shifty 🙂

@elfeay @acuppatae @inishy @d_dreamscape @iClaudiad

So thank you Dublin, and thank you everyone at the conference. It was a blast, and very inspiring to be with so many clever and passionate people.

Xbox One – not all bad

I missed watching the Xbox One live reveal video last night. Generally these overly rehearsed, teleprompter-fed American corporate things are pretty long, drawn out and less than optimal experiences. So I went to Choi instead, obviously!

I came back to see what the general vibe was, and it seemed quite negative, partly because of a focus on TV and web integration rather than games, and where it was about games it was Call of Duty and a new dog character. However, having watched the replay, there were some gems of information in there that I found interesting.

The first was “The Kinect understands the rotation of your wrists and shoulders and can even read your heartbeat.” It seems the new Kinect 2.0 is a much more advanced device, with a wide-angle field of view and an HD image of the world. Whilst the focus seemed to be “look how good Skype video calls will be”, there were some images of the new Kinect skeleton and tracking. It looks fast, assuming it was live. It also showed some fast combat moves as well as some subtle yoga moves. All looking great, if we can get at the tech for things like Choi.

**Update:** added the Wired preview of all the Kinect abilities 🙂

The multiple references to the cloud were generally about storage, as is often the case. However, there was a reference to the processing that can occur in the cloud, i.e. servers: “bigger matches with more players, living and persistent worlds”. So we have the chance for some real persistent virtual worlds and games to exist on the console in a well managed way. These generally exist in PC land, with Second Life, Eve Online and World of Warcraft. There has been the odd foray into proper MMO territory on consoles, such as the new Defiance tie-in and PlayStation Home. Now, though, it looks like this will bring a new generation of games to the consoles, assuming the games companies can adjust to it.

Of course we can expect more of the same for a while after launch, but I think the Xbox One just pips the PS4 at this stage in the race. I do agree, though, that Microsoft could have been a bit less corporate and just blasted it out there in an informal way. I think if you put the PS4 and Xbox One reveals next to one another and swapped the logos, you would not have been able to tell any difference between the two. It's a box, it goes fast and it does stuff connected to other things.

Training in a virtual hospital + zombies

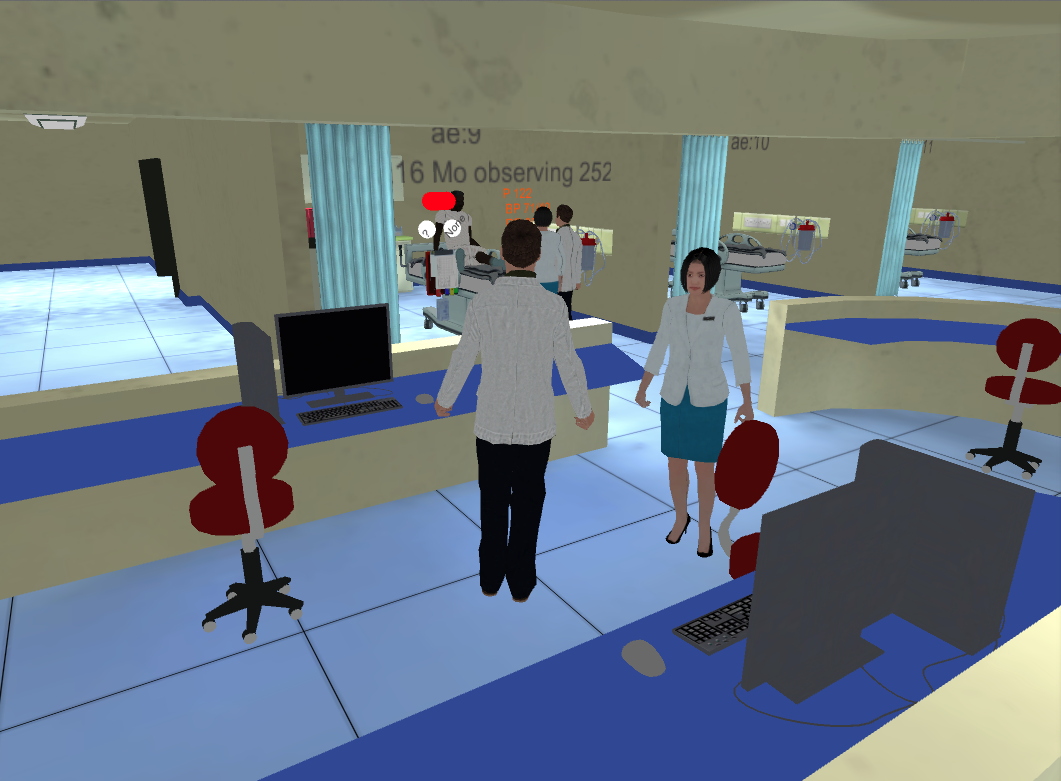

It is not very often I get to write in much detail about some of the work that I do, as often it is within other projects and not always for public consumption. My recent MMIS (Multidisciplinary Major Incident Simulator) work for Imperial College London, and in particular for Dave Taylor, is something I am able to talk about. I have known Dave for a long while through our early interactions in Second Life, when I was at my previous company being a Metaverse Evangelist and he was at NPL. Since then we have worked together on a number of projects, along with the very talented artist and 3D modeller Robin Winter. If you do a little digging you will find Robin has been a major builder of some of the most influential places in Second Life.

Our brief for this project was to create a training simulation dealing with a massive influx of patients to an already nearly full hospital. The aim was that several people running different areas of the hospital would have to work together to make space and move patients around to deal with the influx of new patients. It is about human cooperation and following protocol to reach as good an answer as possible. We also had a design principle/joke of “No Zombies”.

Much of this sort of simulation happens around a desk at the moment, in more of a role play, D&D type of fashion. That sort of approach offers a lot of flexibility to change the scenario and to throw in new things. In moving to an online virtual environment version of the same simulation activity we did not want to lose that flexibility.

Initially we prototyped the whole thing in Second Life. Robin built a two floor hospital and created the atmosphere and visual triggers that participants would expect in a real environment.

Note this already moves on from sitting around a table focussing on the task and starts to place it in context. However, something else to note is that the environment, and the creation of it, can become a distraction from the learning and training objective. It is a constant balance between real modelling, game style information and finding the right triggers to immerse people.

For me the challenge was how to manage an underlying data model of patients in beds in places, of inbound patients, and a simple enough interface to allow bed management to be run by people in world. An added complication was that specific timers and delays needed to be in place. Each patient may take more or less time to be moved depending on their current treatment. So you need to be able to request that a patient is moved, but you then may have to wait a while until the bed is free. Incoming patients to a bed also have a certain time to be examined and dealt with before they can possibly be moved again.

A more traditional object orientated approach might be for each patient to hold their own data and timings, but I decided to centralise the data model for simplicity. The master controller in world decides who needs to change where and sends a message to any interested party to do what they need to do. That meant the master controller held the data for the various timers on each patient and acted as the state machine for the patients.
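A rough sketch of that centralised approach, in Python rather than LSL or C#: one controller owns every patient's state, checks that each change is a legal transition, and broadcasts the result to whoever has subscribed (beds, patient models, boards). The state names and the transition table are hypothetical, as the real ones are not listed here, and the timers are left out to keep it short.

```python
from enum import Enum, auto
from typing import Callable, Dict, List


class PatientState(Enum):
    # Hypothetical states for illustration only.
    ARRIVING = auto()
    OBSERVATION = auto()
    STABLE = auto()
    MOVE_REQUESTED = auto()
    MOVING = auto()


# Allowed transitions: the controller refuses anything not listed here.
TRANSITIONS: Dict[PatientState, List[PatientState]] = {
    PatientState.ARRIVING: [PatientState.OBSERVATION],
    PatientState.OBSERVATION: [PatientState.STABLE],
    PatientState.STABLE: [PatientState.MOVE_REQUESTED],
    PatientState.MOVE_REQUESTED: [PatientState.MOVING],
    PatientState.MOVING: [PatientState.OBSERVATION],
}

Listener = Callable[[str, PatientState], None]


class MasterController:
    """Single owner of all patient state; other objects only listen."""

    def __init__(self) -> None:
        self.states: Dict[str, PatientState] = {}
        self.listeners: List[Listener] = []

    def subscribe(self, listener: Listener) -> None:
        self.listeners.append(listener)

    def admit(self, patient_id: str) -> None:
        self._set(patient_id, PatientState.ARRIVING)

    def change(self, patient_id: str, new_state: PatientState) -> bool:
        current = self.states[patient_id]
        if new_state not in TRANSITIONS[current]:
            return False  # illegal move: nothing changes, nothing is broadcast
        self._set(patient_id, new_state)
        return True

    def _set(self, patient_id: str, state: PatientState) -> None:
        self.states[patient_id] = state
        for listener in self.listeners:  # tell every interested party
            listener(patient_id, state)
```

Keeping the transition table as data means a new rule is a one-line change to the table rather than logic spread across every patient object.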

In order to have complete flexibility of hospital layout, I also made sure that each hospital bay was not a fixed point. This meant dynamically creating patients, beds and equipment at the correct point in space in world. I used the concept of a spawn point. Uniquely identified bay names placed as spawn points around the hospital meant we could add and remove bays and change the hospital layout without changing any code, making this as data driven as possible. Multiple scenarios could then be defined with different bay layouts and hospital structures, with different types of patients and time pressures, again without changing code. The ultimate aim was to be able to generate a modular hospital based on the needs of the scenario. We stuck to the basics, though: a fixed environment (as it was easy to move walls and rooms manually in Second Life) with dynamic bays that rezzed things in place.
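Here is one way the spawn point idea could look as data. It is a hypothetical Python sketch: the bay names, coordinates and `build_bays` function are made up for illustration. The hospital supplies named spawn points, the scenario lists the bays it wants, and only the data changes between scenarios. Skipping bays that the current layout does not have is an assumption about sensible behaviour, not something from the original build.

```python
from dataclasses import dataclass
from typing import Dict, List, Tuple

Vec3 = Tuple[float, float, float]


@dataclass
class Bay:
    name: str
    position: Vec3


def build_bays(spawn_points: Dict[str, Vec3], scenario_bays: List[str]) -> List[Bay]:
    """Create a bay at each named spawn point the scenario asks for.

    Names missing from the hospital layout are skipped rather than raising,
    so a scenario written for a bigger layout still loads.
    """
    return [Bay(name, spawn_points[name]) for name in scenario_bays if name in spawn_points]
```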

This meant I could actually build the entire thing in an abstract way on my own plot of land, also as a backup.

I love to use shapes to indicate the function of something in context. The controller is the box in this picture. The wedge shape is the data. They are close to one another physically. The tori are the various bays and beds. The flat planes represent the whiteboard. They are grouped in order and in place. You can be in the code, and think about object responsibility through shape and space. It may not work for everyone, but it does for me. The controller has a lot of code in it that also has a more traditional structure. One day it would be nice to be able to see and organise that in a similar way.

This created a challenge in Second Life, as there is only 64k of memory available to each script. Here I had a script dealing with a variable number of patients, around 50 or so. Each patient needed data for several timer states plus some identification and description text. Timers in Second Life are a one-per-script sort of structure, so instead I had to use a timer loop to update all the individual timers and check for timeouts on each patient. Making the code nice and readable with lots of helper functions proved not to be the ideal way forward, as the overhead of tidiness was more bytes in the stack getting eaten up. So gradually the code was hacked back to inline copies of common functions. I also had to initiate a lookup in a separate script object for the longer pieces of text, and ultimately yet another to match patients to models.
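The shared timer loop can be sketched like this. In the Second Life version the equivalent would sit inside the script's single `timer()` event (set going with `llSetTimerEvent`); here it is written in Python for consistency with the other sketches, and the `tick` function and deadline dictionary are hypothetical. Each patient has a deadline, and every tick sweeps them all and hands back the ones that have expired.

```python
from typing import Dict, List


def tick(deadlines: Dict[str, float], now: float) -> List[str]:
    """One pass of the shared timer loop.

    `deadlines` maps patient id -> time at which their current delay ends.
    Expired patients are removed from the dict and returned, sorted, so the
    caller can advance each of them to its next state.
    """
    expired = sorted(pid for pid, t in deadlines.items() if t <= now)
    for pid in expired:
        del deadlines[pid]
    return expired
```

Under the 64k limit the same sweep would more likely be written inline over LSL lists than through a helper like this, for the memory reasons above.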

The prototype enabled the customers (doctors and surgeons) to role play the entire thing through and helped highlight some changes that we needed to make.

The most notable was that of indicating to people what was going on. The atmosphere of pressure in the situation is obviously key. Initially, patients arriving at the hospital were sent directly to the ward or zone indicated in the scenario configuration. This meant I had to write a way to find the next available / free bed in a zone, generic enough to deal with however many beds had been defined in the dynamic hospital. Patients arrived, neatly assigned to various beds. Of course, as a user of the system this was confusing: who had arrived where? Teleporting patients into bays is not what normally happens. To try and help, I added non-real-world indicators, lights over beds etc., so that a glance across a ward could show new patients that needed to be dealt with.
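Finding the next free bed in a zone, generic enough for any number of beds, might look like the following minimal Python sketch. The `next_free_bed` function, zone names and bed ids are hypothetical; the one assumption that matters is that each zone keeps its beds in a fixed order, so that "next available" is predictable.

```python
from typing import Dict, List, Optional


def next_free_bed(beds_by_zone: Dict[str, List[str]],
                  occupied: Dict[str, str],
                  zone: str) -> Optional[str]:
    """Return the first bed in `zone` with no patient in it, or None.

    `beds_by_zone` keeps each zone's beds in a fixed order (for example the
    order the spawn points were read). `occupied` maps bed id -> patient id.
    """
    for bed in beds_by_zone.get(zone, []):
        if bed not in occupied:
            return bed
    return None
```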

If a patient arrived automatically but there was no bed, they were placed on one of two beds in the corridor, a sort of visual priority queue. That was a good mechanism to indicate overload and pressure for the exercise. However, we were left with the problem of what happened when that queue was full. The patients had become active and arrived in the simulation but had nowhere to go. In game terms this is of course a massive failure, so I treated it as such: I held the patients in an invisible error ward but put out a big chat message saying this patient needed dealing with.
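The arrival rule (a bed if there is one, then one of the two corridor beds, then the invisible error ward plus a loud message) can be sketched as a small Python function. The names `place_arrival` and `CORRIDOR_SLOTS` and the message text are hypothetical; only the order of preference and the two corridor beds come from the description.

```python
from typing import List, Tuple

CORRIDOR_SLOTS = 2  # two corridor beds act as a visible overflow queue


def place_arrival(patient: str,
                  free_beds: List[str],
                  corridor: List[str],
                  error_ward: List[str]) -> Tuple[str, str]:
    """Place an arriving patient and report where they went.

    Order of preference: a free bed in the target zone, then a corridor bed,
    then the invisible error ward, which also raises a loud alert.
    Returns (location, message).
    """
    if free_beds:
        bed = free_beds.pop(0)
        return bed, f"{patient} admitted to {bed}"
    if len(corridor) < CORRIDOR_SLOTS:
        corridor.append(patient)
        return "corridor", f"{patient} waiting in corridor"
    error_ward.append(patient)
    return "error_ward", f"ALERT: {patient} has nowhere to go and needs dealing with"
```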

I felt it was too clunky to have to walk around the ward keeping an eye out, so, as I had a generic messaging system that told patients and beds where to be, I was able to make more than one object respond to a state change. This led to a quick build of a notice board in the ward, where red, green and yellow status on beds could be seen at a glance. Still, I was not convinced this was the right place for that sort of game style pressure. It needed a different admissions process, one that was controlled by the ward owners. They would need to be able to still say “yes, bring them in to the next available bed” (so my space finding code would still be used) or direct a patient to a specific bed.
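Because the controller already broadcasts state changes, a notice board is just one more listener. A minimal Python sketch, with a hypothetical `NoticeBoard` class and status names; red, green and yellow are mentioned above but not what each means, so the colour mapping here is an assumption.

```python
from typing import Dict

# Hypothetical mapping from bed status to board colour.
COLOURS = {"free": "green", "occupied": "yellow", "needs_attention": "red"}


class NoticeBoard:
    """Another listener on the same bed-status messages the beds receive."""

    def __init__(self) -> None:
        self.cells: Dict[str, str] = {}

    def on_bed_status(self, bed: str, status: str) -> None:
        self.cells[bed] = COLOURS[status]

    def render(self) -> str:
        # One "bed:colour" entry per bed, in a stable order.
        return " ".join(f"{bed}:{colour}" for bed, colour in sorted(self.cells.items()))
```

The sender does not need to know the board exists; it simply has one more subscriber in its list.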

The overall bed management process once a patient was “in” the hospital

The prototype led to the build of the real thing. Unity3d was a natural path of migration, as I knew we could efficiently build the same thing in a way that let people simply use web browsers to access the hospital. I also knew that using Exit Games Photon Server I could get client applications talking to one another in sync. From a software engineering point of view, I knew that in C# I could create the right data structures and underlying model to replicate the Second Life version, but with a much better code structure. It also meant I could create a lot more user interface elements more simply, as this was a dedicated client for this application. HUDs work in Second Life for many things, but ultimately you are trying to stop things happening; you don’t want people building or moving things etc. In a fixed and dedicated Unity client you can focus on the task. Of course, Second Life already had a chat system and voice, so there were clearly a lot of extra things to build in Unity, but there is more than one way to skin a virtual cat.

The basic hospital and patient bed spawning points connected via Photon in Unity were actually quite quick to put together, but as ever the devil is in the detail. Second Life is a server-based application that clients connect to. In Unity you tend to have one of the clients act as a server, or you have each client responsible for something and let the others take a ghosted synchronisation, or a mixture, as I ended up with. Shared network code is complicated. Understanding who has control of and responsibility for something, when it is distributed across multiple clients, takes a bit of thought and attention.

The easiest one is the player character. All the Unity and Photon examples work pretty much the same way. Using the Photon toolkit you can instantiate a Unity object on a client and have that client as the owner. You then define the parameters or data that you want to synchronise in a fairly simple interface class. The code for objects has two modes that you cater for. The first is being owned and moved around; the other is being a ghosted object owned by someone else, just receiving serialised messages about what to do. There are also RPC calls that can be made, asking objects on other clients to do something. This is the basis for everything shared across clients.
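The owned/ghost pattern can be sketched in a few lines. In Photon Unity Networking this is the job of the `OnPhotonSerializeView` callback on a synchronised object; the Python below is not that API, just a hypothetical `NetworkedObject` showing the two modes, with a plain list standing in for the network stream.

```python
from typing import List, Tuple

Position = Tuple[float, float, float]


class NetworkedObject:
    """One object, two modes: owned (writes state) or ghost (reads state)."""

    def __init__(self, is_mine: bool, position: Position = (0.0, 0.0, 0.0)) -> None:
        self.is_mine = is_mine
        self.position = position

    def move(self, position: Position) -> None:
        if not self.is_mine:
            raise PermissionError("ghosts only follow the owner's updates")
        self.position = position

    def serialize(self, stream: List[Position]) -> None:
        # Owner side: push the state the other clients need to see.
        if self.is_mine:
            stream.append(self.position)

    def deserialize(self, stream: List[Position]) -> None:
        # Ghost side: apply the latest state received from the owner.
        if not self.is_mine and stream:
            self.position = stream[-1]
```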

For the hospital, though, I needed a large control structure that defined the state of all the patients and the things that happened to them. It made sense to build the master controller into the master client. In Unity and Photon the player that initiates a game and allows others to connect is the master. Helpfully, there are data properties you can query to find this sort of thing out. So you end up with lots of code that is “if master do x else do y”.

Whoever initiates the scenario then becomes the admin for these simulations. This became a helpful differentiation. I was able to provide some overseeing functions, and start, stop and pause functions, only to the admin. This was a bit trickier in SL but just became a natural direction in Unity/Photon.
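The "if master do x else do y" shape, plus admin-only commands, might look like this. It is a hypothetical Python sketch: `Client`, `is_master` and the command names are illustrative, not Photon properties. It assumes, as described above, that the client who starts the scenario is both master and admin.

```python
from dataclasses import dataclass, field
from typing import List

ADMIN_COMMANDS = {"start", "stop", "pause"}  # hypothetical admin-only commands


@dataclass
class Client:
    name: str
    is_master: bool  # true for whoever created the room and started the scenario
    log: List[str] = field(default_factory=list)

    def handle(self, command: str) -> bool:
        """Run a command if this client is allowed to; return whether it ran."""
        if command in ADMIN_COMMANDS and not self.is_master:
            self.log.append(f"refused {command}")
            return False
        self.log.append(f"ran {command}")
        return True

    def update(self) -> str:
        # The "if master do x else do y" shape.
        return "step simulation" if self.is_master else "apply received state"
```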

One of my favourite admin functions was to just turn off all the walls, just for the admin. Every other client can still see the walls, but the admin has super powers and can see what is going on everywhere.

This is a harder concept for people to take in when they are used to Second Life or have a real world model in their minds. Each client can see or act on the data however it chooses. What you see in one place does not have to be identical to the view elsewhere. Sometimes that fact is used to cheat in games, removing collision detection or being able to see through walls. However, here it is a useful feature.
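A tiny sketch of that idea: what gets drawn is decided locally on each client from the same shared scene data. The `SceneObject` tags and `visible_objects` function are hypothetical Python, not Unity calls; in Unity this would more likely be a matter of disabling the wall renderers on the admin's client only.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class SceneObject:
    name: str
    kind: str  # hypothetical tags such as "wall", "bed", "patient"


def visible_objects(scene: List[SceneObject], is_admin: bool, walls_off: bool) -> List[str]:
    """Decide locally what this client draws; the shared data is unchanged."""
    hide_walls = is_admin and walls_off
    return [o.name for o in scene if not (hide_walls and o.kind == "wall")]
```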

This formed the start of an idea for some more non-real-world admin functions to help monitor what is going on, such as camera textures that let you see things elsewhere. As an example, wards can be looked at as top-down 2D or more like a CCTV camera seeing in 3D. Ideally the admin is really detached from the process. They need to see the mechanics of how people interact, and not always be restricted to the physical space. Participants, however, need to be restricted in what they can see and do, in order to add the elements of stress and confusion that the simulation is aiming for.

Unity gave me a chance to redesign the patient arrival process. Patients did not just arrive in the bays; instead I put up a simple window of arrivals, listing patient numbers and where they were supposed to be heading. Though a very simple technique, this seemed to help give all participants a general awareness that things were happening. Suddenly seeing 10-15 entries arrive in quick succession, seemingly at the door of the hospital, triggers more awareness than lights turning on in and around beds. The lights and indicators were still there, as we still needed to show new patients and ones that were moving. When admitting a patient to a bed, I put in the option to specify a bed or just say next available one. To show where the patient had gone, the observation phase is now additionally indicated by a group of staff around the patient.

I had some grand plans to use the path finding code in the full version of Unity Pro to have non player character (NPC) staff dash towards the beds, and to have people walking to and fro around the hospital for atmosphere. This turned out to be a bit too much of a performance hit for lower spec machines, though it is back on the wish list. It was fascinating seeing how the pathfinding operated. You are able to define buffer zones around walls and indicate what can be climbed or what needs to be avoided. You can then give an object an end point and off it goes, dynamically recalculating paths, avoiding things and dealing with collision, giving doors enough room and, if you do it right (or wrong), leaping over desks, beds and patients to get to where it needs to go 🙂
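The arrivals board and the “specify a bed or next available” choice boil down to a queue and a simple allocation rule. A minimal Python sketch, assuming a ward is just a row of numbered beds; `Ward`, `admit` and the patient numbers are hypothetical, not the project's code.

```python
from collections import deque
from typing import List, Optional


class Ward:
    """A ward as a row of beds; None marks an empty bed (hypothetical model)."""

    def __init__(self, name: str, bed_count: int):
        self.name = name
        self.beds: List[Optional[str]] = [None] * bed_count

    def next_free_bed(self) -> Optional[int]:
        for index, occupant in enumerate(self.beds):
            if occupant is None:
                return index
        return None

    def admit(self, patient: str, bed: Optional[int] = None) -> int:
        """Admit to a chosen bed, or to the next available one if bed is None."""
        if bed is None:
            bed = self.next_free_bed()
            if bed is None:
                raise RuntimeError(f"{self.name} has no free beds")
        elif self.beds[bed] is not None:
            raise ValueError(f"bed {bed} in {self.name} is occupied")
        self.beds[bed] = patient
        return bed


# The arrivals board: (patient number, where they are heading), oldest first.
arrivals = deque([("P101", "A&E"), ("P102", "A&E")])
ae = Ward("A&E", bed_count=3)
ae.admit("P100", bed=0)              # staff picked a specific bed
patient, _destination = arrivals.popleft()
print(ae.admit(patient))             # "next available" -> 1
```

Raising an error when a ward is full is one design choice; a simulation about bed pressure might instead leave the patient on the board so the overflow stays visible.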

One of the biggest challenges was voice. Clearly people needed to communicate; that was the purpose of the exercise. I used a voice package that attempted to package messages across the network using Photon. This was not spatial voice in the way people were used to in Second Life. However, I made some modifications, as I already had roles for people in the simulation. If you were in charge of A&E I had to know that, so role selection became an extra feature that had only been implied in SL. This meant I could alter the voice code to deal with channels: A&E could hear A&E. The admin was also able to act as a tannoy and be heard by everyone. This then started to double up as a phone system: A&E could call the Operating Theatre channel and request they take a patient. Initially this was a push to talk system. I made the mistake of changing it to an open mic. That meant every noise or sound made was constantly sent to every client, and the channel implementation meant the code on each client chose to ignore things not meant for it. This (obviously) turned out to massively swamp the Photon server when we had all our users online. So that needs a bit of work!
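One way to avoid swamping the server is to decide who should hear a frame before sending it, rather than sending to everyone and filtering on receipt. A minimal Python sketch of that routing, assuming the transport can deliver to a chosen subset of clients; `recipients`, `should_transmit`, `TANNOY` and the role names are hypothetical.

```python
from dataclasses import dataclass
from typing import List

TANNOY = "ALL"  # hypothetical channel id for the admin's broadcast


@dataclass(eq=False)
class Participant:
    name: str
    role: str  # the role chosen at the start, e.g. "A&E", "Theatre", "Admin"


def recipients(speaker: Participant, channel: str,
               everyone: List[Participant]) -> List[Participant]:
    """Pick who should receive a voice frame before it is sent.

    Talking on your own role's channel is a team conversation; talking on
    another role's channel works like a phone call to that team; the admin's
    tannoy reaches everybody.
    """
    if speaker.role == "Admin" and channel == TANNOY:
        return [p for p in everyone if p is not speaker]
    return [p for p in everyone if p.role == channel and p is not speaker]


def should_transmit(push_to_talk_held: bool, open_mic: bool) -> bool:
    # With push to talk, nothing leaves the client unless the key is held.
    return open_mic or push_to_talk_held


team = [Participant("Ann", "A&E"), Participant("Bob", "A&E"),
        Participant("Cat", "Theatre"), Participant("Dee", "Admin")]
ann, bob, cat, dee = team
print([p.name for p in recipients(ann, "A&E", team)])      # ['Bob']
print([p.name for p in recipients(ann, "Theatre", team)])  # ['Cat']
print([p.name for p in recipients(dee, TANNOY, team)])     # ['Ann', 'Bob', 'Cat']
```

With sender-side routing, an A&E conversation costs one frame per A&E member rather than one per connected client, and push to talk cuts the traffic further by sending nothing when nobody is speaking.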

Another horrible gotcha was logging. Who did what, and when, was important for the research. As this was in Unity I was able to create the logging structures and their context. However, because we were in a web browser I was not able to write to the file system. So the next best solution was a logging window for the admin that they could at least cut and paste the whole log from. (This was to avoid having to set up another web server and send the logs to it over HTTP, as that was added overhead for the project to manage.) I created the log data and a simple text window that all the data was pumped to. It scrolled, so you could get an overview, and you could simply cut and paste. I worked out that the size was not going to break any data limits, or so I thought. In practice, this text window stopped showing anything after a few thousand entries. Something I had not tested far enough. It turns out that text is treated the same as any other vertex based object, and there are limits to the number of vertices an object can have. So it stopped being able to draw the text, even though lots of it was scrolled off screen: the definition of the object had become too big. i.e. this isn’t like a web text window. It makes sense, but it did catch me out as I was thinking it was “just text”.
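One way round the text window limit is to keep two views of the log: the full history for export, and only a bounded tail for the on-screen text. A minimal Python sketch; `SimulationLog` and the `max_visible` budget are hypothetical, and the right budget depends on where the real text object stops rendering.

```python
from collections import deque
from datetime import datetime, timezone
from typing import Optional


class SimulationLog:
    """Keep every entry for export, but give the on-screen text only the tail.

    max_visible is a hypothetical budget: pick something comfortably below
    the point where the text object stops rendering.
    """

    def __init__(self, max_visible: int = 200):
        self._entries = []                         # full history for the research
        self._visible = deque(maxlen=max_visible)  # what the text window draws

    def record(self, who: str, what: str, when: Optional[datetime] = None) -> None:
        if when is None:
            when = datetime.now(timezone.utc)
        line = f"{when.isoformat(timespec='seconds')}\t{who}\t{what}"
        self._entries.append(line)
        self._visible.append(line)  # deque drops the oldest visible line itself

    def visible_text(self) -> str:
        return "\n".join(self._visible)

    def export_text(self) -> str:
        # Everything, so the admin can copy the whole session out in one go.
        return "\n".join(self._entries)


log = SimulationLog(max_visible=3)
for n in range(10):
    log.record("A&E", f"moved patient P{n}")
print(len(log.visible_text().splitlines()), len(log.export_text().splitlines()))  # 3 10
```

The export still has to go somewhere the admin can copy it from, but it no longer depends on one ever-growing piece of rendered text.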

An interesting twist was the generic noticeboard that gave an overview of the dynamic patients in the dynamic wards. This became a bigger requirement than the quick extra helper it had been in Second Life. A real hospital would have a whiteboard, with a patient summary and various notes attached to it, so it made sense to build one. This meant you could take some notes about a patient, or indicate they needed looking at or had been seen. It sounds straightforward, but the note taking turned out to be a little more complicated. Bear in mind this is a networked application: multiple people can have the same noticeboard open, yet it is controlled by the master client. Typed notes needed to be updated in the model and the changes sent to others. Yes, it turned out I was rewriting Google Docs! I did not go quite that far, but did have to indicate if someone else had edited the things you were editing too.
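The post does not say exactly how edit clashes were detected. One common technique is optimistic concurrency: each note carries a version number, every edit says which version it started from, and the master rejects edits based on a stale version. A minimal Python sketch with hypothetical names (`Noticeboard`, `submit`).

```python
from dataclasses import dataclass, field
from typing import Dict, Tuple


@dataclass
class Note:
    text: str = ""
    version: int = 0
    last_editor: str = ""


@dataclass
class Noticeboard:
    """The master client's authoritative copy of the whiteboard notes."""
    notes: Dict[str, Note] = field(default_factory=dict)

    def submit(self, patient: str, editor: str, base_version: int,
               new_text: str) -> Tuple[bool, Note]:
        """Apply an edit only if it was made against the latest version.

        On a conflict nothing changes; the current note comes back so the
        editor's screen can say someone else got there first.
        """
        note = self.notes.setdefault(patient, Note())
        if base_version != note.version:
            return False, note
        note.text = new_text
        note.version += 1
        note.last_editor = editor
        return True, note


board = Noticeboard()
board.submit("P101", "Ann", base_version=0, new_text="needs obs")
accepted, current = board.submit("P101", "Bob", base_version=0, new_text="seen")
print(accepted, current.last_editor, current.text)  # False Ann needs obs
```

This stops well short of Google Docs style merging: the losing editor is simply told the note has changed and can re-apply their edit on top of the new version.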

We had some interesting things relating to the visuals too. Robin had made a set of patients of various sizes, shapes and genders. However, with 50 patients or so (as any number can be defined), each one described in text, “a 75 year old lady” etc., it was very tricky to have all the variants that were going to be needed. I had taken the view that it would have been better to have generic morph-style characters in the beds to avoid content bloat. The problem with “real” characters is that they have to match the text (that’s editorial control), and you also need more than one of each type. If you have a ward full of 75 year old ladies and there are only 4 models, it is a massive uncanny valley hit. The problem then balloons when you start bringing injuries like amputations into the equation. Very quickly something that is about bed management can become about the details of a virtual patient. In a fully integrated simulation of medical practice and hospital management that can happen, but the core of this project was the pressure on beds. i.e. in air traffic control terms we needed to land the planes; the type of plane was less important (though it still had some relevance).

It is always easy to lose sight of the core learning or game objective with the details of what can be achieved in virtual environments. There is a time and cost to more content, to more code. However I think we managed to get a good balance with the release we have, and now can work on the tweaks to tidy it up and make it even better.

The application has also been of interest to schools. We had it on the Imperial College stand at the Big Bang education festival. I had to make an offline version for this. I was hoping to simply use Unity’s publish offline web version option, which is supposed to remove the need for any network connection or plugin validation. It never worked properly for me though; it always needed the network. I am not sure if anyone else is having that problem, but don’t rely on it. That meant I had to build standalone versions for Mac and Windows. Not a huge step, but an extra set of targets to keep in line. I also had to hack the code a bit. Photon is good at doing “offline” and ignoring some of the elements, but I was relying on a few things, like how I generated the room name, to help identify the scenario. In offline mode the room name is ignored and a generic name is put in its place. Again quite understandable, but it caused me a bit of offline rework that I probably could have avoided.
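A defensive pattern for the room name problem is to treat the scenario encoded in the room name as a convenience and always keep a local fallback. A minimal Python sketch; the `hospital:<scenario>:<session>` naming scheme and all function names are hypothetical, and it makes no assumption about what generic name Photon substitutes offline.

```python
from typing import Optional

ROOM_PREFIX = "hospital"  # hypothetical scheme: "hospital:<scenario>:<session>"


def make_room_name(scenario_id: str, session: str) -> str:
    return f"{ROOM_PREFIX}:{scenario_id}:{session}"


def scenario_from_room(room_name: str) -> Optional[str]:
    parts = room_name.split(":")
    if len(parts) == 3 and parts[0] == ROOM_PREFIX and parts[1]:
        return parts[1]
    return None  # not one of ours, e.g. a generic name substituted offline


def resolve_scenario(room_name: str, configured_scenario: str) -> str:
    """Use the room name when it carries a scenario, else the local setting."""
    return scenario_from_room(room_name) or configured_scenario


print(resolve_scenario(make_room_name("major-incident", "42"), "default"))  # major-incident
print(resolve_scenario("some generic offline name", "zombies"))            # zombies
```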

To make it a bit more accessible, Dave wrote a new scenario with some funnier ailments. This is where we broke our base design principle and yes, we put zombies in. I had the excellent Mixamo models and a free Gangnam Style dance animation. Well, it would have been silly not to put them in. So in the demos, if the kids started to drift off, the “special” button could get pushed and there they were.

I have shared a bit of what it takes to build one of these environments. It has got easier, but that means the difficult things have hidden themselves elsewhere.

If you want to know more about this and similar projects, the official page for the application is here

Obviously if you need something like this built, or talked about to people let me know 🙂

Rez Day again – Reflection on 7 years of joy and pain

All the joys of trying to move physical home, my focus on training in Choi Kwang Do, plus the Predlets being on holiday meant my Rez Day in Second Life nearly passed me by! It has been just over 7 years now since diving into SL, and that has been a catalyst for a great number of changes and opportunities (and quite a few threats) in my life and the lives of many of my friends and colleagues.

I am still amazed at the power of what happened back in 2006: the power of people to gather and share in a virtual space. The creativity and buzz is something that many of us will never fully experience again.

This may seem crazy but this formed part of a customer briefing on security!

We really didn’t know the potential (which is still there); we just knew that exploring code and shapes, interactions with people etc. was going to take us somewhere. I mean, what do you do when your scripted space hopper rolls away into someone else’s space?

There are not many pieces of software that you can look back on and say that of. Of course the software, the networks etc. were just enablers for people to communicate and explore.

I have a collection of photos of real events that happened in world. These are as memorable to me as any other photo or holiday snap. Real people doing real things, just mediated through bits and bytes.

I am still amazed, though, at the fear and negative vibes that many of us endured (and in some cases still do) because of the actions we took online with one another. It is hard to see why, when something is actually so positive, people need to act against it. Not against Second Life, but against the freeform organisation of others. I doubt anyone who has experienced this will ever be able to share the full details of their particular lows. Many are deeply personal. Those acting to destroy such a positive wave of energy knew full well what they were doing; who knows, they may think they have won some fairground prize. In reality they have lost something, probably strengthened something, and in a way done us all a favour.

What do we take as a positive from that, though? Well, anything that generates that much passion, both for and against it, is not just another fad, another niche. It is obviously tapping into some deep human needs: to communicate and share, to gather together, or, on the other side of the virtual coin, to control and break things that people do not understand.

Unfortunately as it was the people not the software that was the problem, it was the people’s potential that got attacked not the bits and bytes.

2006-2009 was a technology bubble for virtual worlds; it was also a cultural bubble. We had to go through that and experience the joy and pain of it all in order to be here for the next wave. Culture takes a while to change, but we are seeing much more sharing, much more open source. A realisation that the power is in people, not organisational structures. You can have a balance. You can have rank, a meritocracy. You can have rules, yet creative freedom within them.

What happened for the small group of us in virtual worlds back then who called ourselves eightbar was that we all worked to improve our own understanding of the potential, but in doing that we wanted to help others who were on the same journey. It was not about money or power. It was not about glory or control. That is hard to understand for many people who were not feeling the buzz.

I am now able to reflect on what we did instinctively, for the good of the art so to speak, by seeing how the martial art I study works. This does not feel like a tenuous link, as the conversations we have resonate with those of 7 years ago for me.

A martial art is a meritocracy. Through your personal goals and willingness to better yourself at your own level, you earn belts. In Choi Kwang Do this is not through beating people or competing. It is not through negative comments on how badly you are doing, how much you missed a target. Instead it is active encouragement to enjoy mistakes, to evolve and reach a goal. Lining up in belt rank order is never to say those with the higher belt are better. They are more experienced, but they still learn, they still add to their skills. This is where it may lose some people though. We have control. A person, usually with lots of experience, will run a session. They will give commands, set tasks. We do them. It may seem regimented, yet each person is aiming to improve their technique, to learn and evolve. The person in charge at the time is also improving their own knowledge through observation, helping others with positive pointers. People step up to lead and are allowed to lead because they have earned the respect of everyone. However, they never, ever apply any ego to that.

If I were to head back to 2006 and the wave of awesome virtual world discoveries, the teamwork and the sense of adventure we all had, I am pretty sure I would do it all again. For me now, having seen another very positive gathering of humans trying to explore something exciting, yet applying some structure, I may have been able to help us be something a little stronger to deal with those less enlightened individuals. Maybe even to help them achieve more. The martial art is focussed on defence against a threatening force though. Without the potential for threats, without a counter to all this positivity, it would not need to exist. So maybe we just enjoyed such a productive and interesting time because we were up against some people who were not so interesting or productive 🙂

This year I still did some work in Second Life too. I was part of a build of a hospital experience that was about dealing with a mass influx of patients. The doctors and hospital staff (real ones) had to decide which patient to move where to make space for the sudden influx. There was a lot of code I had to design and put in place to make patients deteriorate, take time to move from one place to another, deal with multiple decisions, provide visual feedback etc. We did all this in order to help lock down the requirements. We then went on to build a web based, networked version with voice in Unity3D. We have since used it to inspire some kids too, and we even added a few friendly dancing zombies. Kids love zombies. It is a fine line between playing and being serious. Virtual worlds and games bounce across that line, twist it, warp it and sometimes rub it out altogether. Still, they can be used for so many reasons, and why wouldn’t you?

A busy week of sharing ideas

This week has been a very busy one of sharing ideas with all sorts of people in all sorts of places. It started on Monday with the BCS Animation and Games Specialist group AGM, with a bit of the usual admin, making sure we are all happy with who is on the committee and inviting a new member to the fold. Then I got to do another presentation. As I had already done my Blended Learning with games one a few weeks before, I talked about how close we might be to a holodeck, mostly based on my article in Flush Magazine on the subject. Afterwards, though, the discussion with some of the game development students turned into a tremendous ideas jam session. It was a very cool moment.

On Tuesday I did a repeat visit (after 2 years) to BCS Birmingham for the evening and gave the ever evolving talk on getting tech into TV land for kids. I have to de-video the pitch before popping it onto Slideshare, as I use footage from The Cool Stuff Collective and I don’t have the media rights to put those bits up online. I am always asked why. I don’t have a good answer, as it is out of my hands.

Wednesday was a quick trip to Silicon Roundabout to talk funding, games and startups. Getting off the tube at Old Street and walking to Ozone for a coffee based meeting felt like stepping into a very vibrant hub of startup and tech activity. Which it is, of course.

Thursday was an early start and off to London for the BCS members convention. It was good to catch up with a lot of people I have met over the years through this professional body. It was very refreshing to see a definite new approach by the BCS, with some great presentations, lots of tweeting and some interesting initiatives.

The most important was the work the BCS Academy has done (along with industry) to get Computer Science recognised by the Department for Education as the fourth science in the new syllabus. They really are going to start teaching programming from age 5 up! Result!

The day had already started well when I was honoured to receive an appreciation award from the BCS for the work I do as chair of Animation and Games. As I had done two talks the two days before this was very timely 🙂

Friday was a trip to help on the Imperial College London stand at the massive education science fair The Big Bang Exhibition.

ExCeL was buzzing with thousands of visitors.

There were tech zones, biology zones, 3D printers, Thrust SSC and even an entire maker zone.

The robot Rubik’s Cube solver was there in all its glory too.

Space was well represented with real NASA astronauts chatting and drawing quite a crowd.

The Imperial College London stand was based around the medical side of things and on the corner of that stand was a Feeding Edge Ltd production 🙂

There will be a proper post on this but here is a link to the official line on the Multidisciplinary Major Incident Simulator

This Unity3D and Photon Cloud solution (that I wrote the code for) allows staff to practise a kind of air traffic control situation, dealing with a massive influx of patients: having to shuffle beds and decide who goes where.

It was great being able to show this to a younger generation at the exhibition. Many came to the stand because they were interested in medicine. However, a few came over to talk tech 🙂 They wanted to be programmers and build games. We had some good chats about Minecraft too. Many of them appreciated the zombies that we threw into the configurable scenarios too :). If just a few percent of the people who came to the fair get excited about science it will be worth it. It seemed, though, that there was a vibrant new interest in all sciences, and it is not as gloomy as it may appear sometimes.

Now it’s time to get my Dobok on and go and celebrate the opening of Hampshire’s first full time Choi Kwang Do facility and help get more people interested in the wonders of Choi. Phew!