As I am looking at a series of boiled-down use cases for virtual world and gaming technology, I thought I should return to the exploration of body instrumentation and the potential for feedback in learning a martial art such as Choi Kwang Do.

I have of course written about this potential before, but I have built a few little extra things into the example using a new Windows machine with a decent amount of power (HP Envy 17″) and the Kinect for Windows sensor with the Kinect SDK and Unity 3d package.

The package comes with a set of tools that let you generate a block man based on the joint positions. However, the controller piece of code has some options for turning on the user map and skeleton lines.

In this example I am also using Unity Pro, which allows me to position more than one camera and have each of those generate a texture on another surface.

You will see the main block man appear centrally “in world”. The three screens above him are showing a side view of the same block man, a rear view and interestingly a top down view.
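As a rough sketch of what those extra views amount to, here is the idea reduced to simple orthographic projections of joint positions. This is not the Unity camera setup used in the recording; the joint names, the coordinates and the `project` function are all made up for illustration, and the axis convention (x to the side, y up, z the way the figure faces) is an assumption.

```python
from typing import Dict, Tuple

Vec3 = Tuple[float, float, float]
Vec2 = Tuple[float, float]

# Hypothetical joint positions in metres (illustrative values only).
# Assumed axes: x = sideways, y = up, z = the direction the figure faces.
SKELETON: Dict[str, Vec3] = {
    "head": (0.0, 1.7, 0.0),
    "right_hand": (0.4, 1.2, 0.6),
    "left_foot": (-0.2, 0.0, -0.1),
}


def project(joint: Vec3, view: str) -> Vec2:
    """Flatten a 3d joint onto a 2d view by dropping one axis."""
    x, y, z = joint
    if view == "top":   # looking down: drop height
        return (x, z)
    if view == "side":  # looking across: drop the sideways axis
        return (z, y)
    if view == "rear":  # looking from behind: drop depth
        return (x, y)
    raise ValueError(f"unknown view: {view}")


def project_skeleton(skeleton: Dict[str, Vec3], view: str) -> Dict[str, Vec2]:
    """Project every joint of a skeleton onto the chosen view."""
    return {name: project(pos, view) for name, pos in skeleton.items()}
```

Calling `project_skeleton(SKELETON, "top")` gives the overhead picture of where the hands and feet sit relative to the head, which is the view you never get in class.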

In the bottom right is the “me” with lines drawn on. The Kinect does the job of cutting out the background. So all this was recorded live running Unity3d.

The registration of the block man and the joints isn’t quite accurate enough at the moment for precise Choi movements, but this is the old Kinect. The new Kinect 2.0 will no doubt be much better, as well as being able to register your heart rate.

The cut out “me” is a useful feature, but you can only have that projected onto the flat camera surface; it is not a thing that can be looked at from left/right etc. The block man, though, is made of actual 3d objects in space. The cubes are coloured so that you can see joint rotation.
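Because the joints are real points in 3d space, they can also be measured, which is where feedback for a technique could start. A minimal sketch, assuming each joint is just an (x, y, z) tuple: the angle at a middle joint (say the elbow) from its two neighbours (shoulder and wrist). The function name and the example points are mine, not part of the Kinect package.

```python
import math
from typing import Tuple

Vec3 = Tuple[float, float, float]


def joint_angle(a: Vec3, b: Vec3, c: Vec3) -> float:
    """Angle in degrees at joint b, between the segments b->a and b->c."""
    v1 = tuple(p - q for p, q in zip(a, b))
    v2 = tuple(p - q for p, q in zip(c, b))
    dot = sum(p * q for p, q in zip(v1, v2))
    n1 = math.sqrt(sum(p * p for p in v1))
    n2 = math.sqrt(sum(p * p for p in v2))
    if n1 == 0.0 or n2 == 0.0:
        raise ValueError("joints must not coincide")
    # Clamp to guard against tiny floating point overshoot before acos.
    cosine = max(-1.0, min(1.0, dot / (n1 * n2)))
    return math.degrees(math.acos(cosine))
```

A fully extended arm comes out near 180 degrees and a right-angle bend near 90, so a sequence of these values over time would describe how a punch unfolds.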

I think I will reduce the size of the joints and try and draw objects between them to give him a similar definition to the cutout “me”.

The point here though is that game technology and virtual world technology are able to give a different perspective of a real world interaction. Seeing techniques from above may prove useful, and is not something that can easily be observed in class. If that applies to Choi Kwang Do then it applies to all other forms of real world data. Seeing from another angle, exploring and rendering in different ways can yield insights.

It is also data that can be captured and replayed, transmitted and experienced at a distance by others. Capture, translate, enhance and share. It is something to think about. What different perspectives could you gain of data you have access to?


A simple virtual world use case – learning by being there

With my metaverse evangelist hat on I have for many years, in presentations and conversations, tried to help people understand the value of using game style technology in a virtual environment. The reasons have not changed, they have grown, but a basic use case is one of being able to experience something, to know where something is or how to get to it before you actually have to. The following is not to show off any 3d modelling expertise; I am a programmer who can use most of the tool sets. I put this “place” together mainly to figure out Blender, to help the predlets build in things other than Minecraft. With a new Windows laptop complementing the MBP, I thought I would document this use case by example.

Part 1 – Verbal Directions

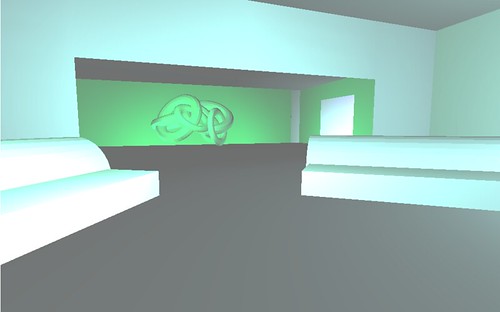

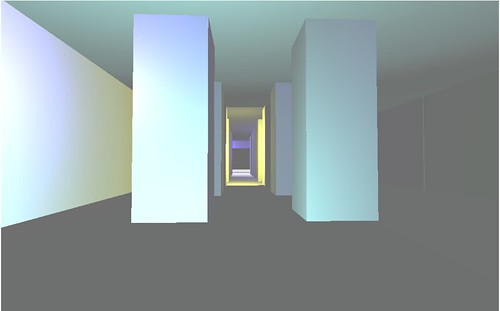

Imagine you have to find something, in this case a statue of a monkey’s head. It is in a nice apartment. The lounge area has a couple of sofas leading to a work of art in the next room. Take a right from there and a large number of columns lead to an ante room containing the artefact.

What I have done there is describe a path to something. It is a reasonable description, and it is quite a simple navigation task.

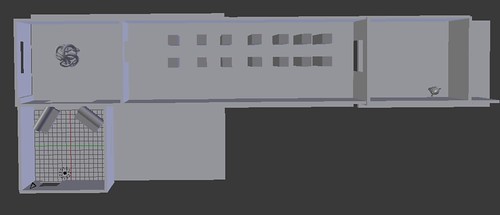

Now let’s move from words, or a verbal description of placement, to a map view. This is the common one we have had for years. Top down.

Part 2 – The Map

A typical map: you start from the bottom left. It is pretty obvious where to go: two rooms up, turn right, keep going and you are there. This augments the verbal description, or can work just on its own. It is simple and quite effective, but it filters a lot of the world out in simplification, mainly because maps are easy to draw. It requires a cognitive leap to translate to the actual place.

Part 3 – Photos

You may have often seen pictures of places to give you a feel for them. They work too. People can relate to the visuals, but it is a case of you get what you are given.

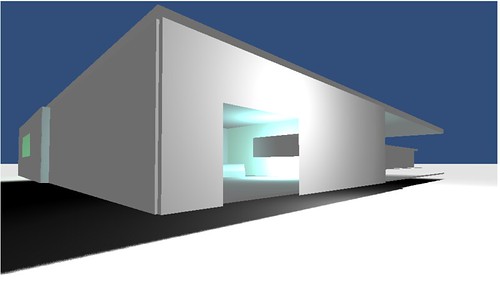

The entrance

The lounge

The columned corridor

The goal.

Again in a short example this allows us to get quite a lot of place information into the description. “A picture paints a thousand words”. It is still passive.

A video of a walkthrough would of course be an extra step here; that is more pictures one after the other. Again, though, it is directed. You have no choice how to learn, how to take in the place.

Part 4 – The virtual

Models can now very easily be put into tools like Unity3d and published to the web so that they can be walked around. If you click here, you should get a Unity3d page and after a quick download (assuming you have the plugin 😉 if not get it !) you will be placed at the entrance to the model, which is really a 3d sketch, not a full on high end photo realistic rendering. You may need to click to give it focus before walking around. It is not a shared networked place, it is not really a metaverse, but it has become easier than ever to network such models and places if sharing is an important part of the use case (such as in the hospital incident simulator I have been working on).

The mouse will look around, and ‘w’ will walk you the way you are facing (‘s’ is backwards; ‘a’ and ‘d’ move side to side). Take a stroll in and out, down to the monkey and back.
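For anyone curious what that control scheme boils down to, here is a minimal sketch of it on a flat floor. It is not the Unity controller the model uses; the `step` function, the yaw convention (0 degrees faces +z, turning clockwise when seen from above) and the step distance are assumptions for illustration.

```python
import math
from typing import Tuple

Vec2 = Tuple[float, float]  # (x, z) position on the floor


def step(position: Vec2, yaw_degrees: float, key: str, distance: float = 1.0) -> Vec2:
    """Move one step for a w/a/s/d key press, relative to the facing direction."""
    yaw = math.radians(yaw_degrees)
    forward = (math.sin(yaw), math.cos(yaw))   # assumed: yaw 0 faces +z
    right = (math.cos(yaw), -math.sin(yaw))    # 90 degrees clockwise of forward
    directions = {
        "w": forward,
        "s": (-forward[0], -forward[1]),
        "d": right,
        "a": (-right[0], -right[1]),
    }
    if key not in directions:
        return position  # any other key leaves you where you are
    dx, dz = directions[key]
    return (position[0] + dx * distance, position[1] + dz * distance)
```

The mouse only changes `yaw_degrees`; the keys then move you relative to wherever you happen to be looking, which is what makes the route your own choice.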

I suggest that now you have a much better sense of the place, the size, the space, the odd lighting. The columns are close together; you may have bumped into a few things. You may linger on the work of art. All of these tiny differences are putting this place into your memory. Of course finding this monkey is not the most important task you will have today, but apply the principle to anything you have to remember, conceptual or physical. Choosing your way through such a model or concept is simple but much more effective, isn’t it? You will remember it longer and maybe discover something else on the way. It is not directed by anyone: your speed, your choice. This allows self reflection in the learning process, which reinforces understanding of the place.

Now imagine this model, made properly, with nice textures and lighting, a photo realistic place, and pop on a VR headset like the Oculus Rift, which in this case is very simple with Unity3d. Your sense of being there is even further enhanced, and it only takes a few minutes.

It is an obvious technology isn’t it? A virtual place to rehearse and explore.

Of course you may have spotted that this virtual place, whilst in Unity3d to walk around, also provided the output for the map and for the photo navigation. Once you have a virtual place you can still do things the old way if that works for you. It’s a virtual virtuous circle!

Dear BBC I am a programmer and a presenter let me help

I was very pleased to see that Tony Hall, the new DG of the BBC, wants to get the nation coding. He plans to “bring coding into every home, business and school in the UK”. http://www.bbc.co.uk/news/technology-24446046

So I thought, as I am lacking a full time agent in the TV world, I should throw my virtual hat in the ring to offer to work on the new programme that the BBC has planned for 2015.

It is not the first time I have offered assistance to such an endeavour, but this is the most public affirmation of it happening.

So why me? Well, I am a programmer and have been since the early days of the shows on TV, back in the ZX81/C64/BBC Model A/B/Spectrum days. I was initially self taught through listings in magazines and general tinkering before studying to degree level, and then pursuing what has been a very varied career, generally involving new tech each step of the way.

I was lucky enough to get a TV break with Archie Productions and the ITV/CITV show The Cool Stuff Collective, well documented on this blog 😉 In that I had an emerging technology strand of my own. The producers and I worked together to craft the slot, but most of it was driven by things that I spend my time sharing with C-level executives and at conferences about the changing world and maker culture.

It was interesting getting the open source Arduino, along with some code, on screen in just a few short minutes. It became obvious there was a lot more that could be done to help people learn to code. Of course these days we have many more ways to interact too. We do not have to just stick to watching what is on screen; that acts as a hub for the experience. Code, graphics, art work, running programs etc. can all be shared across the web and social media. User participation, live and in sync with on-demand, can be very influential. Collections of ever improving assets can be made available, then examples of how to combine them put on TV.

We can do so much with open source virtual worlds, powerful accessible tools like Unity 3d and of course platforms like the Raspberry Pi. We can also have a chance to explore the creativity and technical challenges of user generated content in games, and next gen equipment like the Oculus Rift. Extensions to the physical world with 3d printers, augmented reality and increasingly blended reality offer scope for innovation and invention by the next generation of technical experts and artists. Coding and programming is just the start.

I would love to help; it is such an important and worthy cause for public engagement.

Here is a showreel of some of the work.

There is more here and some writing and conference lists here

So if you happen to read this and need some help on the show get in touch. If you are an agent and want to help get this sort of thing going then get in touch. If you know someone who knows someone then pass this on.

This blog is my CV, though I do have a traditional few pages if anyone needs it.

Feeding Edge, taking a bite out of technology so you don’t have to.

Yours Ian Hughes/epredator

CGI people and horses, People as CGI

Yes, I know some of us of a certain web age still think CGI is “Common Gateway Interface”, and it gets us thinking in Perl and about $POST and $GET, but CGI is now commonly known as Computer Generated Imagery. Things have certainly changed over the last few years with respect to what can actually be generated and just how good it has got.

Last night I saw the new Galaxy chocolate ad. Normally if there is some CGI my brain goes into “how did they do that” mode, travelling around the uncanny valley. However, I watched this ad and thought, wow, that actress looks just like Audrey Hepburn. I did a quick google to find that it was Audrey Hepburn, the CGI version. Now watching it again I can see that it is CGI, though not all the time.

The other ad doing the rounds that is obviously CGI, unless horses really can moonwalk, is 3’s dancing pony. Strangely it is the real life bits that jar with me on this. The close in on what are model hooves is jarring compared to the smoothness of the rest of it.

It is nice to see 3 doing a pony dance mixer as a YouTube application. It is a lot more Monty Python than slick CGI but it’s worth a few moments to have a go 🙂 Mine is here

What I saw today though, whilst researching more about Holodecks, was this great twist on the sort of building projection projects that animate entire cityscapes. Here the projection canvas is a person. This is really brilliant I think. Lots of the images certainly stick with you after watching it. It works by scanning the person (who then sits very still); the scan informs the projector how to deliver and map the textures onto the surface. It is the same way designers use a 3d application to create a UV map of the textures and even baked lighting.

This is more blended reality at work 🙂 It is similar to how the Kinect performs some visual trickery on screen (not yet via projection but maybe soon) in things like Kinect Party.