I missed watching the Xbox One live reveal video last night. Generally these overly rehearsed, teleprompter-fed American corporate events are long, drawn out and less than optimal experiences. So I went to Choi instead, obviously!

I came back to see what the general vibe was and it seemed quite negative. Partly this was because of a focus on TV and web integration rather than on games, and where it was about games it was Call of Duty and a new dog character. However, having watched the replay, I found some gems of information in there that were interesting.

The first was “The Kinect understands the rotation of your wrists and shoulders and can even read your heartbeat.” It seems the new Kinect 2.0 is a much more advanced device, with a wide-angle field of view and an HD image of the world. Whilst the focus seemed to be “look how good Skype video calls will be”, there were some images of the new Kinect skeleton and tracking. It looks fast, assuming it was live. It also showed some fast combat moves, combined with some subtle yoga moves. It all looks great if we can get at the tech for things like Choi.

**Update:** added the Wired preview of all the Kinect abilities 🙂

The multiple references to the cloud were generally about storage, as is often the case. However, there was a reference to the processing that can occur in the cloud, i.e. on servers: “bigger matches with more players, living and persistent worlds”. So we have the chance for some real persistent virtual worlds and games to exist on the console in a well managed way. These generally exist in PC land, with Second Life, EVE Online and World of Warcraft. There has been the odd foray into proper MMO territory on consoles, such as the new Defiance tie-in and PlayStation Home. Now, though, assuming the games companies can adjust to it, this looks like it will bring a new generation of games to the consoles.

Of course we can expect more of the same for a while after launch, but I think the Xbox One just pips the PS4 at this stage in the race. Though I do agree Microsoft could have been a bit less corporate, just blasted it out there in an informal way and told us things differently. I think if you put the PS4 and Xbox One reveals next to one another and swapped the logos, you would not have been able to tell any difference between the two. It’s a box, it goes fast and it does stuff connected to other things.

Almost a re-launch, here we go

After what has seemed an age we have finally moved family home (and of course the base for Feeding Edge). It has meant a lot of downtime, work-wise. Packing, shifting and unpacking all take real time out of the day. The biggest problem had been a lack of internet, which is somewhat essential for an online business! At the previous place we had superfast broadband with BT Infinity FTTC (Fibre To The Cabinet) at about 70 Mbps. When we moved house I checked the local exchange and it seemed to be Infinity enabled. When I put in the house move request, though, BT could not determine whether they could re-do Infinity until the phone line was enabled, but could offer 2-8 Mbps broadband. It was also going to take 2 weeks to enable the line, i.e. until after we had moved. So I used the time off the net to sort out everything house related.

On Wednesday the ADSL router lit up and data was once again able to flow, albeit at 5 Mbps, to all the various machines. I was a bit surprised that the phone didn’t light up too. As it was now enabled I thought I would phone BT and check what was going on. The helpdesk was adamant that I did not have internet enabled as the phone line was not connected yet. They insisted that I must be picking up a neighbour’s wifi, not an ADSL line. I was not overly worried about that, but I was definitely attached to ADSL: I was looking at the router admin pages, the light was on, we were on my own wifi network, and I had looked up the number to call and the status page using that very connection.

All I can assume is that a very kind engineer had been trying to sort out the phone and broadband; there was clearly a technical fault with some element of the phone number, but they patched in broadband (which is actually more use than a phone line these days). The BT checker says we can’t get Infinity yet, though it does say it will be available in May 2013. As it is May 2013, I really hope we get hooked up soon. It is very hard to go backwards in capacity and it is certainly going to slow me down a little.

Either way, the phone got fixed and by Thursday this Death Star was fully operational (well, on reduced power really).

On Friday I got to try the new, quicker route to London and went to talk about some more medical training development, building on MMIS.

So Monday, today, is the first proper day of the new, yet still the same, Feeding Edge. I am still here taking a bite out of technology so you don’t have to. The digital doors are open; let’s see what this part of the adventure brings us.

Already, though, next month (June) is looking pretty busy.

I am attending, and speaking at (along with the wonderful Lisa Feay), the Irish Symposium on Game Based Learning.

I am also heading to Amsterdam to talk about Blended Reality Learning at the GOTO festival.

So it’s all go, all the same, yet brand new 🙂

Rez Day again – Reflection on 7 years of joy and pain

All the joys of trying to move physical home, my focus on training in Choi Kwang Do, plus the Predlets being on holiday meant my Rez Day in Second Life nearly passed me by! It has been just over 7 years now since diving into SL and that has been a catalyst for a great number of changes and opportunities (and quite a few threats) in my life and the lives of many of my friends and colleagues.

I am still amazed at the power of what happened back in 2006: the power of people to gather and share in a virtual space. The creativity and buzz is something that many of us will never fully experience again.

This may seem crazy, but this formed part of a customer briefing on security!

We really didn’t know the potential (which is still there); we just knew that exploring code and shapes, interactions with people etc. was going to take us somewhere. I mean, what do you do when your scripted space hopper rolls away into someone else’s space?

There are not many pieces of software that you can look back on and say that about. Of course the software, the networks etc. were just an enabler for people to communicate and explore.

I have a collection of photos of real events that happened in world. These are as memorable to me as any other photo or holiday snap. Real people doing real things, just mediated through bits and bytes.

I am still amazed, though, at the fear and negative vibes that many of us endured, and in some cases still do, because of the actions we took online with one another. It is hard to see why, when something is actually so positive, people feel the need to act against it. Not act against Second Life, but against the freeform organisation of others. I doubt anyone who has experienced this will ever be able to fully share the details of their particular lows. Many are deeply personal. Those acting to destroy such a positive wave of energy knew full well what they were doing; who knows, they may think they have won some fairground prize. In reality they have lost something, probably strengthened something, and in a way done us all a favour.

What do we take as a positive from that, though? Well, anything that generates that much passion, both for and against it, is not just another fad or another niche. It is obviously tapping into some deep human needs: either to communicate, share and gather together, or, on the other side of the virtual coin, to control and break things that people do not understand.

Unfortunately, as it was the people, not the software, that was the problem, it was the people’s potential that got attacked, not the bits and bytes.

2006-2009 was a technology bubble for virtual worlds; it was also a cultural bubble. We had to go through that and experience the joy and pain of it all in order to be here for the next wave. Culture takes a while to change, but we are seeing much more sharing, much more open source, and a realisation that the power is in people, not organisational structures. You can have a balance. You can have rank, a meritocracy. You can have rules, yet creative freedom within them.

What happened back then for a small group of us in virtual worlds, a group called eightbar, was that we all worked to improve our own understanding of what the potential was, and in doing that we wanted to help others who were on the same journey. It was not about money or power. It was not about glory or control. That is hard to understand for many people who were not feeling the buzz.

I am now able to reflect on what we did instinctively for the good of the art, so to speak, by seeing how the martial art I study works. This does not feel like a tenuous link, as the conversations we have resonate for me with those of 7 years ago.

A martial art is a meritocracy. Through your personal goals and willingness to better yourself at your own level, you earn belts. In Choi Kwang Do this is not through beating people or competing. It is not through negative comments about how badly you are doing or how much you missed a target. Instead it is active encouragement to enjoy mistakes, to evolve and to reach a goal. Lining up in belt rank order is never to say those with the higher belt are better. They are more experienced, but they still learn; they still add to their skills. This is where it may lose some people, though. We have control. A person, usually with lots of experience, will run a session. They will give commands and set tasks. We do them. It may seem regimented, yet each person is aiming to improve their technique, to learn and evolve. The person in charge at the time is also improving their own knowledge through observation, helping others with positive pointers. People step up to lead and are allowed to lead because they have earned the respect of everyone. However, they never, ever apply any ego to that.

If I were to head back to 2006 and the wave of awesome virtual world discoveries, the teamwork and the sense of adventure we all had, I am pretty sure I would do it all again. For me now, having seen another very positive gathering of humans trying to explore something exciting, yet applying some structure, I might have been able to help us become something a little stronger to deal with those less enlightened individuals. Maybe even to help them achieve more. The martial art is focussed on defence against a threatening force, though. Without the potential for threats, without a counter to all this positivity, it would not need to exist. So maybe we just enjoyed such a productive and interesting time because we were up against some people who were not so interesting or productive 🙂

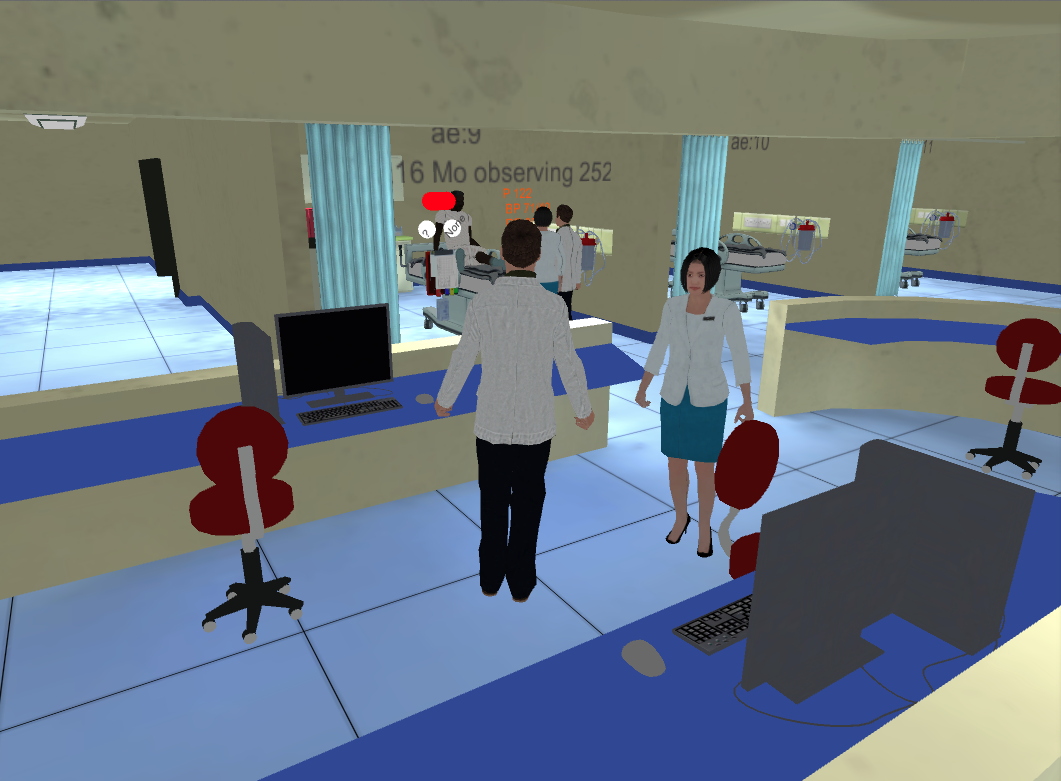

This year I still did some work in Second Life too. I was part of a build of a hospital experience that was about dealing with a mass influx of patients. The doctors and hospital staff (real ones) had to decide which patient to move where to make space for the sudden influx. There was a lot of code I had to design and put in place to make patients deteriorate and take time to move from one place to another, to deal with multiple decisions, to provide visual feedback etc. We did all this in order to help lock down the requirements. We then went on to build a web based, networked version, with voice too, in Unity3d. We have since used it to inspire some kids as well; we even added a few friendly dancing zombies. Kids love zombies. It is a fine line between playing and being serious. Virtual worlds and games bounce across that line, twist it, warp it and sometimes rub it out altogether. Still, they can be used for so many reasons, and why wouldn’t you?
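To give a flavour of the mechanics described above (patients deteriorating, moves taking time, visual feedback), here is a minimal sketch. It is not the Second Life or Unity3d code from the project; it is a hypothetical Python model that assumes a fixed simulation tick, a per-patient health score that declines until the patient reaches a bed, and a traffic-light colour standing in for the visual feedback. The names `Patient`, `start_move`, `tick` and `status`, and all the thresholds, are illustrative.

```python
from dataclasses import dataclass
from typing import List


# Hypothetical model: names, rates and thresholds are illustrative assumptions,
# not the original Second Life or Unity3d implementation.
@dataclass
class Patient:
    name: str
    health: float            # 100 = stable, 0 = critical
    decline_per_tick: float  # how fast this patient deteriorates while not in a bed
    location: str = "arrivals"
    moving_ticks_left: int = 0  # > 0 while the patient is being moved
    destination: str = ""


def start_move(patient: Patient, destination: str, travel_ticks: int) -> None:
    """Begin moving a patient; the move completes after travel_ticks ticks."""
    patient.destination = destination
    patient.moving_ticks_left = travel_ticks


def tick(patients: List[Patient]) -> None:
    """Advance one step: finish any moves that are due, then deteriorate anyone not in a bed."""
    for p in patients:
        if p.moving_ticks_left > 0:
            p.moving_ticks_left -= 1
            if p.moving_ticks_left == 0:
                p.location = p.destination
        # Assumption: only a patient who has reached a bed stops deteriorating.
        if p.location != "bed":
            p.health = max(0.0, p.health - p.decline_per_tick)


def status(p: Patient) -> str:
    """Stand-in for visual feedback: map health onto a traffic-light colour."""
    if p.health > 60:
        return "green"
    if p.health > 30:
        return "amber"
    return "red"
```

The point of the time-consuming move is that a decision is not free: a patient keeps deteriorating while in transit, which is what makes the “who goes where” choices interesting for the staff playing it.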

Battling robots – an interesting future?

I am not sure how many people remember Rock ’Em Sock ’Em Robots and all their variants. Here is a version of the original advert for them.

There were small figures connected to mechanical rods with thumb press buttons. You could move them back and forth and side to side with the aim of punching the other bot on the chin, causing its head to pop up into the air.

I did not have this version, but I did have a human-shaped boxing set. They were more free moving, not stuck in a ring, and had a counter of how many hits they had taken.

All in all they were great fun.

Which is why I know I would love to have a go on Robot Combat League. I just happened to bump into it while channel hopping. I initially thought it was Robot Wars, which had arena combat between very different radio controlled wheeled vehicles carrying big hammers and circular saws.

It turned out to be something different: a giant game of Rock ’Em Sock ’Em.

In Robot Combat League a team of 2 people controls each robot. One moves it backward, forward and side to side. The other wears a rig that takes their arm movements to make the bot punch.

The movement around is a bit odd as the robot is not really free standing. The legs are fairly cosmetic as the bot is held up by a large frame. The punching, then, is based on timing and targeting. Each bot is designed to look different and have a different style to it, but they are all rigged with sensors for pyrotechnics, so lots of sparks fly when a good hit is made. That was all good but could obviously get a bit samey. It then turned out that the robots were actually able to damage one another: one bot swung into the arm of another, broke a hydraulic line, and the robot was disabled and left dripping robot blood onto the arena floor for the cheering and jeering audience.

All very exciting blended reality fighting.

This is, of course, what happens in the film Real Steel, which is one potential future of combat to consider.

The fact that these are just engineered puppets, just like the toy of the 1960s, puts a human element into the fight. It is people wanting to win against other people. I couldn’t help but wonder what would happen if we were able to have a fully free standing robot able to accept all the subtleties of human movement, and how we would be able to use that in Choi Kwang Do as a training aid 🙂 Of course there is one problem to consider in mirroring human movement. If a person makes contact with something or someone during a technique, that technique, where they land and what comes next all flow from the situation. The return impact is important. Punching into the air means that after contact you and the robot are no longer in sync. Your fist follows through; theirs stops on the armour of the other one. Giving you haptic feedback so you feel what has happened would be a good start, but of course then you will be shoved around, pushed etc., so why have the robot at all 😉

The real excitement will be to have suitable fighting intelligence, one that can free form fight against another bot. Then of course we find ourselves approaching Asimov’s territory and robot rights. Is it OK to watch robots destroy one another, as opposed to remote controlled machines?

There is no doubt, though, that this phase of robot combat is an interesting one to watch. I am just off to get the welding kit out 🙂

Or we could just build it in Minecraft – look at this 🙂

Gadget Show Live 2013

Yesterday I made what has become an annual pilgrimage to the NEC in Birmingham for the press and preview day of The Gadget Show Live. Initially we started going to this for The Cool Stuff Collective, as a recce for the show. Now, though, it is just out of interest in all things tech.

The show has a lot of televisions and wireless speakers, and a massive Windows stand too. There are also the regulars, such as GAME and lots of manufacturers of cases for iPhones etc.

However, there are always some more interesting corners to explore. This year there were more 3d printers than usual, some great real holography and some very cool, very fast RC cars. A quick video, shot and edited solely on the iPhone 5, has some of the highlights.

This 3d printed guitar body was cool.

A water powered battery

Sphero, a ball that you drive around with your iPad

Some great collectibles

A brand new Star Trek game (which looked fantastic)

The Igloo with a sensor based gun to give 360 degree gaming (I would like one of these please!)

Some gadgets to help you fly out of the water and the crazy bouncy spring shoes too.

So a whole bunch of stuff. It only took a couple of hours to see everything, but I got there early and the halls were not as packed as they will be on public days.

Still a lot of interesting stuff even when you live on this sort of information 24/7 🙂

A busy week of sharing ideas

This week has been a very busy one of sharing ideas with all sorts of people in all sorts of places. It started on Monday with the BCS Animation and Games Specialist Group AGM, with a bit of the usual admin, making sure we are all happy with who is on the committee and inviting a new member to the fold. Then I got to do another presentation. As I had already done my Blended Learning with games one a few weeks before, I talked about how close we might be to a holodeck, mostly based on my article in Flush Magazine on the subject. Afterwards, though, the discussion with some of the game development students turned into a tremendous ideas jam session. It was a very cool moment.

On Tuesday I made a repeat visit (after 2 years) to BCS Birmingham for the evening and gave the ever evolving talk on getting tech into TV land for kids. I have to de-video the pitch before I can pop it onto SlideShare, as I use footage from The Cool Stuff Collective and I don’t have the media rights to put those bits up online. I am always asked why; I don’t have a good answer as it is out of my hands.

Wednesday was a quick trip to Silicon Roundabout to talk funding, games and startups. Getting off the tube at Old Street and walking to Ozone for a coffee-based meeting felt like stepping into a very vibrant hub of startup and tech activity, which it is, of course.

Thursday was an early start and off to London for the BCS Members’ Convention. It was good to catch up with a lot of people I have met over the years through this professional body. It was very refreshing to see a definite new approach from the BCS, with some great presentations, lots of tweeting and some interesting initiatives.

The most important was the work the BCS Academy has done (along with industry) to get Computer Science recognised by the Department for Education as the fourth science in the new syllabus. They really are going to start teaching programming from age 5 up! Result!

The day had already started well when I was honoured to receive an appreciation award from the BCS for the work I do as chair of Animation and Games. As I had done two talks on the two days before, this was very timely 🙂

Friday was a trip to help on the Imperial College London stand at the massive education science fair The Big Bang Exhibition.

ExCeL was buzzing with thousands of visitors.

There were tech zones, biology zones, 3d printers, Thrust SSC and even an entire maker zone.

The robot Rubik’s Cube solver was there in all its glory too.

Space was well represented with real NASA astronauts chatting and drawing quite a crowd.

The Imperial College London stand was based around the medical side of things and on the corner of that stand was a Feeding Edge Ltd production 🙂

There will be a proper post on this, but here is a link to the official line on the Multidisciplinary Major Incident Simulator.

This Unity3d and Photon Cloud solution (which I wrote the code for) allows staff to practise a kind of air traffic control situation: dealing with a massive influx of patients, having to shuffle beds and decide who goes where.

It was great being able to show this to a younger generation at the exhibition. Many came to the stand because they were interested in medicine. However, a few came over to talk tech 🙂 They wanted to be programmers and build games. We had some good chats about Minecraft too. Many of them appreciated the zombies that we threw into the configurable scenarios :) If just a few percent of the people who came to the fair get excited about science, it will be worth it. It seemed, though, that there was a vibrant new interest in all the sciences, and it is not as gloomy as it may sometimes appear.

Now it’s time to get my Dobok on and go and celebrate the opening of Hampshire’s first full-time Choi Kwang Do facility, and help get more people interested in the wonders of Choi. Phew!

Palm reading, well finger pointing actually

Props to @andypiper for tweeting this to me today. As I have had my virtual self buried in a Unity3d build, I had not had a surf around for a few days. Andy sent me an article about Myo, a gesture control armband. It is currently available for preorder, and it appears to be a way of detecting muscle movement in the hand and lower arm to act as a blended reality controller. I am assuming it will be a simpler version of the technology used in the latest prosthetic arms.

What it does do is free you from a camera based approach, which means you can gesture anywhere. That becomes relevant if the thing you are controlling is not a computer screen but another active device. Their website shows an AR.Drone.

It mixes sensing electrical muscle signals with general motion sensing, and connects via Bluetooth. It looks like they are providing an API and toolkit for Windows and Mac from day 1 🙂

I am very tempted to pre-order but as we have an impending house (and business) move keeping track of what I have on long term order and changing things is enough of a knightmare already. If only I could just wave my arm and solve it all… hey wait a minute…..

Here comes the PS4

Last night many of us watched on Ustream as Sony unveiled the PS4. As I tweeted at the time, it was great to see Ustream used in such a high profile event, as we had streamed a Second Life and real world interview at Wimbledon back in 2007. Just a few of us with portable cameras and a willingness to try something different.

Sony had a pretty impressive stage set up. There was a very large main screen, but they went for a CAVE type approach, where the area around the screen and the side walls formed part of the experience. It was a bit like Microsoft’s IllumiRoom.

There was a massive influx of tweets when the first person on said “over the next 2 hours we will…..”, with shouts of “get on with it please!”

Anyway, they did get on with it. The PS4 is pretty much a ramped-up general purpose computer again. It has significant extra memory and additional processors to do some of the extra tasks. One of the things they mentioned was that it would be able to download patches and updates in the background, i.e. unlike the PS3, which sits and downloads patches without allowing you to do anything else. Of course this really should be what they do. As part of this they announced being able to play games whilst they are downloading. So it is down to the design process to give you the bits you need at the start, not just a loading screen game as we used to see in the 8-bit days.

The new controller was unveiled; it is pretty much the old controller with a touch pad. A significant feature was a big light on the front. They said this was to help identify one another’s controllers more easily. That was not really true, as it was then announced that a dual camera sensor would ship with the unit and would be able to see the controllers, i.e. they stuck the glowing PS Move lights into the controllers 🙂 We will have to see what benefit that gives.

Sharing and connecting featured heavily: being able to access content anywhere, and targeted content. “A dynamic preference driven path through the world of content”, apparently! The PS Vita was often mentioned too, as the perfect way to enjoy streamed content when the main TV could not be used (Remote Play).

Then there was cloud streaming. It had to feature, as Sony had bought Gaikai. It would seem to be a core part of the social experience too. So if something is on the PS Store you can play it straight away, with no need to download (or pay), giving any game a free trial period. As network speeds increase this makes a great deal of sense. Of course it then makes you wonder why you need a massively powerful console if rendering is done remotely. However, the best of both worlds can’t hurt, can it?

The PS4 controller now gets a share button: a physical share button rather than the web page ones of Facebook and the like. This lets you share whatever you are doing with whoever, in all the various permutations. They have put a dedicated video compression processor on board that basically encodes the last n minutes (I think n is 15) of whatever you are doing. You hit share and it throws the video to the world. This is to cut out all editing, apparently. I hope it does not remove replay options in games, as being able to recreate a moment from data and view it from anywhere is still great fun. Everyone replaying the same in-game view of the same thing may get a little… samey.
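Keeping “the last n minutes” of video is essentially a rolling (ring) buffer: new footage goes in at one end and the oldest footage falls off the other. Here is a minimal sketch of that idea, assuming the encoder hands over one compressed chunk per second of gameplay. The `ReplayBuffer` class and its methods are hypothetical, and the 15 minute default just mirrors my guess for n above, not anything Sony has specified.

```python
from collections import deque


class ReplayBuffer:
    """Hold only the most recent window_seconds of encoded video chunks.

    Hypothetical sketch: assumes the encoder emits one chunk per second.
    The 15 minute default mirrors the guess for n, not a confirmed spec.
    """

    def __init__(self, window_seconds: int = 15 * 60):
        # A deque with maxlen silently discards the oldest chunk when full,
        # so memory use stays fixed however long you play.
        self._chunks = deque(maxlen=window_seconds)

    def push(self, chunk: bytes) -> None:
        """Called once per second with the latest encoded chunk."""
        self._chunks.append(chunk)

    def share(self) -> bytes:
        """What a share button press might hand off: everything currently buffered."""
        return b"".join(self._chunks)
```

The fixed-size buffer is why no editing step is needed: whatever is in the window when you press share is the clip.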

As well as the PS Vita, all the other second screens got a mention, so we will have to see how designers use them.

**Update:** I almost forgot (it was a long show): they extended the social aspect and Remote Play so that you can hand over the reins of a game to someone somewhere else in the world. It is a bit like when one of the Predlets asks me to do a bit of a level for them and hands me a controller. Whether many gamers want to hand over control is another matter. I am reminded of a patent idea @martinjgale and I had years ago at the old company. It detected your inability to complete a level, or your frustration, and remotely patched in a guide or helper to get you past your moments of despair so you could enjoy the rest of the game. It was a great idea, but somehow it didn’t get through the review process as they regarded it as silly. Well….. 🙂
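As a rough illustration of the “detect frustration, call in a helper” idea, here is a minimal sketch. It is not the patent’s method; it assumes that repeated failures on the same level within a short time window are a crude proxy for frustration. The `FrustrationDetector` class, its thresholds and the level ids are all hypothetical.

```python
from collections import deque


class FrustrationDetector:
    """Hypothetical sketch: flag a player who keeps failing the same level.

    Assumption: max_failures failures within window_seconds is treated as
    frustration. A real system would likely use richer signals.
    """

    def __init__(self, max_failures: int = 5, window_seconds: float = 600.0):
        self.max_failures = max_failures
        self.window_seconds = window_seconds
        self._failures = {}  # level id -> deque of failure timestamps

    def record_failure(self, level: str, timestamp: float) -> bool:
        """Record a failed attempt; return True if a helper should be offered."""
        times = self._failures.setdefault(level, deque())
        times.append(timestamp)
        # Sliding window: forget failures older than window_seconds.
        while times and timestamp - times[0] > self.window_seconds:
            times.popleft()
        return len(times) >= self.max_failures
```

A sliding window rather than a lifetime count means someone who fails occasionally over a long session is not nagged, while a burst of failures in quick succession is.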

The main UI looked very Xbox 360, with the current trend for huge video icons laid out in Mondrian-style patterns, some animated, primarily so that our clumsy touch interfaces can target them. Still, it looked nice.

Then we were treated to a whole host of games, probably in-game footage, but where cut scenes end and the game begins is not always clear. We did, though, get straight into first person shooting and then first person driving. Great genres, now shinier and smoother. Not a massive leap in game design, though.

So I am sure the PS4 will be great, and I am sure when they finally release it I will have to add it to the console collection. The test will be whether it has great content that is worth all this sharing. That, of course, is down to the games industry. They have a new box ready to do massive triple A titles, as long as they don’t forget the innovation that brings us awesome experiences like Minecraft, which the talking heads video seems to indicate they haven’t 🙂

Here are the games though

Building an electric superbike – Flush issue 6

The new edition of Flush magazine has just gone live and it features a little departure from my normal format with an interview I did with my friend Mike Edwards (@asanyfuleno) about his fascinating project gathering people together to build a revolutionary new electric racing superbike. It starts at page 105 (this is a direct link to it)

There are loads of other interesting articles in the magazine as usual.

I am really pleased we got this done, as we had talked about it for ages. It would be great to get a behind the scenes documentary going about this project too. As we mention in the article, Mike is also taking a crowdsourcing route to get the project stages moving. This is all hot off the press, so look out for his helpusinnovate.com, and if you happen to be intrigued or can help, I am sure Mike would love to hear from you 🙂

Solving some of the challenges for a race bike will have a direct knock-on effect on commercial everyday use. I have been pondering an electric car replacement for the shorter journeys when we finally move house in the next few weeks. I was surprised at how few options there were, and there is still a heavy early adopter premium to consider. One major manufacturer’s car looked less expensive, with its on the road price much lower than others, but it then turned out you have to rent the battery and pay a monthly fee. So there is definitely a need for some innovation in the electric vehicle business to make it work for people.

Hopefully Mike’s project will make for some very exciting racing, and I look forward to seeing an awesome independent bike take on the factory machines (petrol and electric).

Four years of Feeding Edge six years of tweets

This month marks the 4th year of Feeding Edge Ltd (est. 2009), and it has been interesting to finally be able to get at the Twitter archive for my @epredator account and look back at some of the things that have happened.

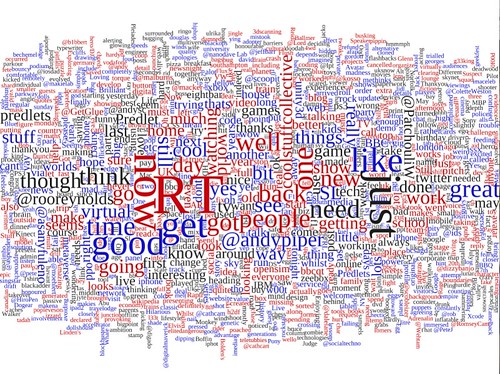

I thought in celebration I would generate a tag cloud using Processing and WordCram. It took a bit of massaging of memory levels as there are a lot of words 🙂
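Underneath any tag cloud is a word count: each word’s size is driven by how often it appears. WordCram itself is a Processing (Java) library and does its own weighting, so the sketch below is not its API. It is a standalone Python illustration of the counting step, assuming the tweet texts have already been pulled out of the archive into a list of strings. The stop-word list and the `word_weights` function are hypothetical.

```python
import re
from collections import Counter

# Illustrative stop-word list (an assumption); a real run would use a much longer one.
STOP_WORDS = {"the", "a", "an", "and", "to", "of", "in", "on", "for", "is", "it", "i"}

URL_PATTERN = re.compile(r"https?://\S+")
# Keep a leading @ or # so handles and hashtags survive as words.
WORD_PATTERN = re.compile(r"[@#]?[\w']+")


def word_weights(tweets, top_n=50):
    """Return the top_n (word, count) pairs across all tweets, most frequent first.

    Words are lower-cased, links are dropped and stop words are ignored;
    the counts are the kind of weights a tag cloud sizes words by.
    """
    counts = Counter()
    for text in tweets:
        text = URL_PATTERN.sub(" ", text.lower())
        for word in WORD_PATTERN.findall(text):
            word = word.strip("'")
            if word and word not in STOP_WORDS:
                counts[word] += 1
    return counts.most_common(top_n)
```

Note that “RT” is not a stop word here, which is exactly how a prolific retweeter ends up with RT as the biggest word in the cloud.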

This seems very representative of most of the time I have had Feeding Edge up and running. It is after all 2/3 of the tweets time wise and I am probably a more prolific tweeter (and Retweeter as RT is the biggest word 🙂 ) now than ever.

I obviously have talked about the Predlets a lot, and talked to some particular people more than others. It is interesting that in general the words look positive as I try not to be too negative, even back when things were very difficult.

So happy birthday Feeding Edge 🙂 right back to the Unity3d development then 🙂